This article explores the basic concepts behind character encoding and then dives deeper into the technical details of encoding systems.

If you have just a basic knowledge of character encoding and want to better understand the essentials, the differences between encoding systems, why we sometimes end up with nonsense text, and the principles behind the architecture of different encoding systems, then read on.

Getting to understand character encoding in detail requires extensive reading and a good chunk of time. I’ve tried to save you some of that effort by bringing it all together in one place while providing what I believe to be a pretty thorough background on the topic.

I’m going to go over how single-byte encodings (ASCII, Windows-1251, etc.) work, how Unicode came to be, the Unicode-based encodings UTF-8 and UTF-16 and how they differ, the specific features of various encodings and their compatibility (or lack thereof), character encoding principles, and a practical guide to how characters are encoded and decoded.

While character encoding may not be a cutting-edge topic, it’s useful to understand how it works now and how it worked in the past, and this article aims to give you that picture without costing you a lot of time.

History of Unicode

I think it’s best to start our story at a time when computers were nowhere near as advanced, or as commonplace a part of our lives, as they are now. The developers and engineers coming up with standards back then had no idea that computers and the internet would become as hugely popular and pervasive as they did. When that happened, the world needed character encodings.

But how could you have a computer store characters or letters when it only understood ones and zeros? Out of this need came ASCII. It wasn’t necessarily the first encoding, but it was the most widely used and set the benchmark, so it’s a good place to start.

But what is ASCII? ASCII itself defines 128 characters, each identified by a 7-bit code, and in practice each character is stored in an 8-bit byte. Some easy arithmetic shows that a full byte can take 256 values (eight bits, each a zero or a one: 2⁸ = 256).

The first 128 values (2⁷ = 128) are the ASCII set proper: Latin letters, digits, control characters (such as hard line breaks, tabs and so on) and punctuation. The remaining 128 values, those with the eighth (high-order) bit set, were left free, and national encodings used them for their own alphabets. This way the first 128 characters are always the same, and if you’d like to encode your native language, you can help yourself to the remaining 128.

This gave rise to a panoply of national encodings, which leads to situations like this: say you’re in Russia creating a text file, which by default will use Windows-1251 (the Russian encoding used in Windows). You send the document to someone outside Russia, say in the US. Even if the recipient knows Russian, they’re going to be out of luck when they open the document on a computer whose software defaults to a different encoding, because they’ll see bizarre garbled characters (mojibake) instead of Russian letters. More precisely, any English letters will appear just fine, because the first 128 symbols in Windows-1251 and ASCII are identical, but wherever there is Russian text, the recipient’s software will decode the bytes with the wrong table unless the user manually selects the right character encoding.

The problem with national character encodings is obvious. As these national encodings multiplied and the internet exploded, everyone wanted to write in his or her own language without producing indecipherable mojibake.

There were two options at this point — use an encoding for each country or create a universal character map to represent all characters on the planet.

A Short Primer On ASCII

It may seem overly elementary, but if we’re going to be thorough we have to cover all the bases.

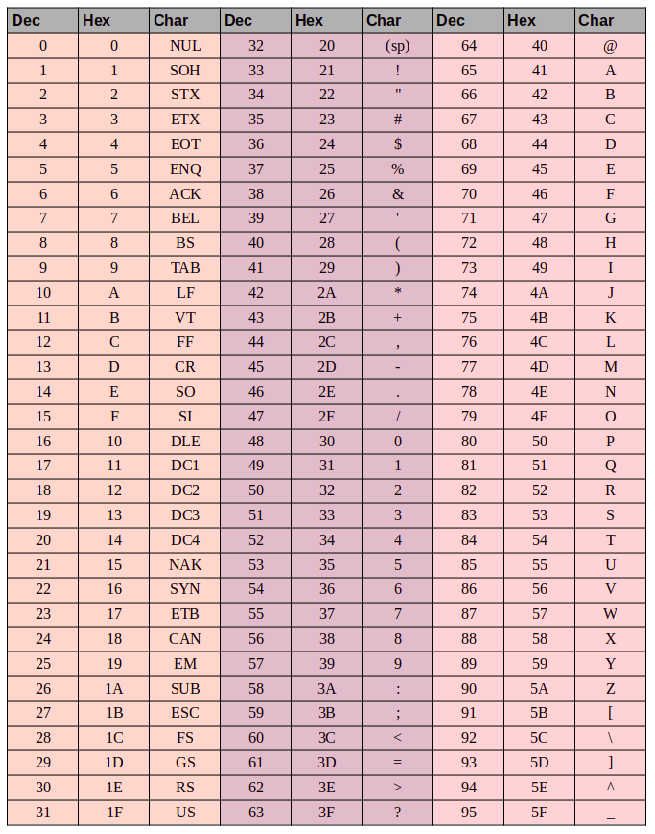

There are three groups of columns in the ASCII table:

- the decimal value of the character

- the hexadecimal value of the character

- the glyph for the character itself

Let’s say we want to encode the word “ok” in ASCII. The letter “o” has a decimal value of 111, or 6F in hexadecimal; in binary that is 01101111. The letter “k” is 107 in decimal and 6B in hex, or 01101011 in binary. So the word “ok” in ASCII looks like 01101111 01101011. Decoding works the other way around: we take each group of 8 bits, convert it to a decimal number (the character’s code), and look up the corresponding symbol in the table.
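To make the round trip concrete, here is a minimal Python sketch (assuming Python 3.9 or later; `ascii_rows` and `decode_bits` are illustrative names, not part of any library) that prints each letter of “ok” in the table’s columns plus binary, then decodes the bit string back.

```python
def ascii_rows(text: str) -> list[tuple[str, int, str, str]]:
    """Return (glyph, decimal, hex, binary) for each character; hypothetical helper."""
    rows = []
    for byte in text.encode("ascii"):  # raises UnicodeEncodeError for non-ASCII text
        rows.append((chr(byte), byte, f"{byte:02X}", f"{byte:08b}"))
    return rows

def decode_bits(bits: str) -> str:
    """Reverse direction: parse each 8-bit group as a number and look it up."""
    return bytes(int(group, 2) for group in bits.split()).decode("ascii")

for glyph, dec, hx, binary in ascii_rows("ok"):
    print(glyph, dec, hx, binary)
# o 111 6F 01101111
# k 107 6B 01101011

print(decode_bits("01101111 01101011"))  # ok
```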

Unicode

From the above, it should be pretty obvious why a single common character map was needed. But what would it look like? The answer is Unicode, which is actually not an encoding but a character set. It consists of 1,114,112 positions, or code points, most of which are still unassigned, so it’s unlikely the set will need to be expanded.

The Unicode standard consists of 17 planes of 65,536 code points each, and each plane contains a group of symbols. Plane zero is the Basic Multilingual Plane (BMP), where we find the most commonly used characters of all modern alphabets. The second plane (plane 1) contains, among other things, characters from dead languages and historic scripts such as Old Persian. There are even two planes reserved for private use, and most planes are still empty.

Unicode has code points from 0 through 10FFFF (in hexadecimal).

Code points are written in hexadecimal, preceded by “U+”. So, for example, the first plane, the Basic Multilingual Plane, includes characters U+0000 to U+FFFF (0 to 65,535), and the seventeenth plane (plane 16) contains U+100000 through U+10FFFF (1,048,576 to 1,114,111).
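Because every plane is exactly 65,536 (10000 in hex) code points wide, the plane number is just the code point divided by 10000 hex, which is a right shift by 16 bits. A small Python sketch, with `plane_of` as a hypothetical helper:

```python
def plane_of(code_point: int) -> int:
    """Return the Unicode plane (0-16) of a code point; hypothetical helper."""
    if not 0 <= code_point <= 0x10FFFF:
        raise ValueError("outside the Unicode range 0 through 10FFFF")
    # Each plane spans 0x10000 code points, so dividing by 0x10000
    # (a right shift by 16 bits) gives the plane number.
    return code_point >> 16

for cp in (0x006F, 0xFFFF, 0x103D5, 0x100000, 0x10FFFF):
    print(f"U+{cp:04X} is in plane {plane_of(cp)}")
# U+006F is in plane 0
# U+FFFF is in plane 0
# U+103D5 is in plane 1
# U+100000 is in plane 16
# U+10FFFF is in plane 16
```

Planes are numbered from zero, which is why the seventeenth plane is plane 16.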

So now, instead of a menagerie of encodings, we have an all-encompassing table that covers all the symbols and characters we might ever need. But it is not without its drawbacks. With a single-byte encoding every character took exactly one byte; with Unicode, a character’s number may take one, two, or more bytes to store. For example, one byte used to be enough for all the letters of the English alphabet, and the Latin letter “o” in Unicode is U+006F, the same number as in ASCII: 6F in hexadecimal and 111 in decimal. But the symbol U+103D5 (the Old Persian number “100”) is 103D5 in hex, or 66,517 in decimal, a 17-bit number that needs three bytes.
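The byte counts above refer to storing the code point as a plain binary number, before any particular Unicode encoding is applied. A quick Python sketch (`min_bytes` is an illustrative name; the Cyrillic “м”, U+043C, is an extra example) that counts the bits and rounds up to whole bytes:

```python
def min_bytes(code_point: int) -> int:
    """Whole bytes needed to hold the code point as a plain binary number.

    Hypothetical helper: this is not how UTF-8 or UTF-16 store characters.
    """
    return max(1, (code_point.bit_length() + 7) // 8)

for cp in (0x006F, 0x043C, 0x103D5):
    print(f"U+{cp:04X}", cp, f"{cp.bit_length()} bits ->", min_bytes(cp), "byte(s)")
# U+006F 111 7 bits -> 1 byte(s)
# U+043C 1084 11 bits -> 2 byte(s)
# U+103D5 66517 17 bits -> 3 byte(s)
```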

Unicode encodings such as UTF-8 and UTF-16 exist to handle this complexity, and we’ll look at them next.

UTF-8

UTF-8 is a variable-width Unicode encoding that can represent any Unicode symbol.

What do we mean by variable-width? First of all, we need to understand that the structural (atomic) unit of UTF-8 is a byte. Variable-width encoding means that one character can be encoded using different numbers of these units, or bytes. For example, basic Latin letters are encoded with one byte, and Cyrillic letters with two.
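Python’s built-in `str.encode` shows the variable width directly. This sketch (the `utf8_report` helper is illustrative, and the euro sign “€” is an extra example not taken from the text) prints how many bytes each character takes in UTF-8:

```python
def utf8_report(ch: str) -> tuple[int, str]:
    """Return (number of bytes, hex dump) of one character encoded as UTF-8."""
    encoded = ch.encode("utf-8")
    return len(encoded), encoded.hex(" ")

for ch in ("o", "м", "€", "\U000103D5"):
    size, dump = utf8_report(ch)
    print(f"U+{ord(ch):04X}: {size} byte(s): {dump}")
# U+006F: 1 byte(s): 6f
# U+043C: 2 byte(s): d0 bc
# U+20AC: 3 byte(s): e2 82 ac
# U+103D5: 4 byte(s): f0 90 8f 95
```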

Before we move on, a slight aside regarding the compatibility between ASCII and UTF-8.

The fact that Latin letters and key control characters such as line breaks, tab stops, etc. take up one byte makes UTF-8 compatible with ASCII. In other words, Latin letters and control characters sit at exactly the same code points in ASCII and Unicode, and they are encoded as the same single byte in both ASCII and UTF-8, so UTF-8 is backward compatible with ASCII.

Let’s use the letter “o” from our ASCII example from earlier. Recall that its position in the ASCII table is 111, or 01101111 in binary. In the Unicode table, it’s U+006F, which is also 01101111. Since UTF-8 is a variable-width encoding and this code point fits in 7 bits, UTF-8 stores “o” in a single byte, exactly as ASCII does. The same holds for all characters 0 through 127. So, if your document contains only English letters, you wouldn’t notice a difference between opening it as UTF-8 or as ASCII; you would only notice one once the text contained characters outside ASCII, such as the letters of other national alphabets. (UTF-16 is different: as we’ll see below, it uses two bytes even for “o”.)
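A quick way to check this compatibility claim, sketched in Python: encode the ASCII word “ok” three ways and compare the bytes. The `utf-16-be` codec is used so that no byte order mark is added; that choice is an assumption of this sketch.

```python
text = "ok"
as_ascii = text.encode("ascii")
as_utf8 = text.encode("utf-8")
as_utf16 = text.encode("utf-16-be")  # big-endian, without a byte order mark

# ASCII-only text produces byte-for-byte identical output in ASCII and UTF-8...
print(as_ascii == as_utf8)   # True
print(as_utf8.hex(" "))      # 6f 6b
# ...but UTF-16 spends two bytes on each of these characters.
print(as_utf16.hex(" "))     # 00 6f 00 6b
```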

Let’s look at how the mixed English/Russian phrase “Hello мир” would appear in three different encoding systems: Windows-1251 (Russian encoding), ISO-8859-1 (encoding system for Western European languages), UTF-8 (Unicode). This example is telling because we have a phrase in two different languages.

Now let’s consider how these encoding systems work, how we can convert a line of text from one encoding to another, what happens when characters are displayed improperly, and what happens when the conversion simply can’t be done because of differences between the systems.

Let’s assume that our original phrase was written in Windows-1251. Looking at the table above and converting each character’s decimal or hex value to binary, we get the following Windows-1251 encoding:

01001000 01100101 01101100 01101100 01101111 00100000 11101100 11101000 11110000
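The same bytes can be reproduced with Python’s built-in `cp1251` codec (Python’s name for Windows-1251). This is a sketch for checking the table lookup, not a description of how any particular editor saves files.

```python
phrase = "Hello мир"
# "cp1251" is Python's codec name for Windows-1251.
cp1251_bytes = phrase.encode("cp1251")
print(" ".join(f"{b:08b}" for b in cp1251_bytes))
# 01001000 01100101 01101100 01101100 01101111 00100000 11101100 11101000 11110000
print(list(cp1251_bytes[-3:]))  # [236, 232, 240]
```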

So now we have the phrase “Hello мир” in Windows-1251 encoding.

Now imagine that we have a text file but we don’t know what encoding system the text was saved in. We assume it’s encoded in ISO-8859-1 and open it in our word processor using this encoding system. Some of the characters appear just fine, as they exist in this encoding system at the same code points, but the letters of the Russian word “мир” don’t fare as well. ISO-8859-1 has no Cyrillic letters, and at those positions it has completely different characters. In Windows-1251, “м” is code point 236, “и” is 232, and “р” is 240, but in ISO-8859-1 these code points correspond to “ì” (236), “è” (232), and “ð” (240).

So our mixed-language phrase “Hello мир”, encoded in Windows-1251 and read as ISO-8859-1, looks like “Hello ìèð”. The two encodings are only partially compatible: a phrase encoded in one can’t be displayed properly in the other, because the symbols we need simply don’t exist in the second encoding.
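A short Python sketch of both failure modes, using the standard `cp1251` and `latin-1` codecs (`latin-1` is Python’s name for ISO-8859-1; `can_encode` is an illustrative helper): decoding with the wrong table garbles the text, and trying to encode Cyrillic into ISO-8859-1 fails outright.

```python
cp1251_bytes = "Hello мир".encode("cp1251")

# Reading Windows-1251 bytes with the ISO-8859-1 table produces mojibake.
misread = cp1251_bytes.decode("latin-1")
print(misread)  # Hello ìèð

def can_encode(text: str, encoding: str) -> bool:
    """True if every character of text exists in the given encoding."""
    try:
        text.encode(encoding)
    except UnicodeEncodeError:
        return False
    return True

# ISO-8859-1 has no Cyrillic letters, so the original phrase cannot be stored in it.
print(can_encode("Hello мир", "latin-1"))  # False
print(can_encode("Hello мир", "utf-8"))    # True
```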

The solution is a Unicode encoding; in our case, we’ll use UTF-8 as an example. In UTF-8 a character takes between one and four bytes, and, unlike the two single-byte encodings above, UTF-8 isn’t restricted to 256 symbols but can represent every symbol in the Unicode character set.

It works something like this: the leading bits of the first byte of each encoded character don’t describe the glyph or symbol itself but tell us how many bytes the character occupies. If the first bit is zero, we know that the encoded symbol uses just one byte, which makes UTF-8 backward compatible with ASCII. If we look closely at the ASCII table, we see that the first 128 symbols (the English alphabet, control characters, and punctuation marks), when expressed in binary, all begin with a 0 bit (note that if you convert characters to binary using an online converter or anything similar, the leading zero may be dropped, which can be a bit confusing).

01001000: the first bit is 0, so 1 byte encodes 1 character -> “H”.

01100101: the first bit is 0, so 1 byte encodes 1 character -> “e”.
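Checking the first bit takes a single shift. A minimal Python sketch that inspects each byte of “Hello” in UTF-8:

```python
data = "Hello".encode("utf-8")
for byte in data:
    first_bit = byte >> 7  # shift away the low 7 bits, leaving only the first bit
    # chr(byte) is safe here because every byte is below 128.
    print(f"{byte:08b}", first_bit, chr(byte))
# 01001000 0 H
# 01100101 0 e
# 01101100 0 l
# 01101100 0 l
# 01101111 0 o
```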

If the first bit is 1, the symbol is encoded in several bytes, and the number of leading 1 bits in the first byte tells us how many.

A two-byte encoding will have 110 for the first three bit values.

11010000 10111100: the marker bits are 110 and 10, so we use 2 bytes to encode 1 character. The second byte in this case always starts with “10”. We drop these control bits (the leading 110 of the first byte and 10 of the second), take the remaining bits (10000111100), and convert them to hex (43C) -> U+043C, which gives us the Russian “м” in Unicode.
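The same bit surgery for “м”, written with masks in a minimal Python sketch: `& 0b00011111` keeps the five payload bits of the lead byte and `& 0b00111111` keeps the six payload bits of the continuation byte.

```python
b1, b2 = 0b11010000, 0b10111100  # the two UTF-8 bytes of "м"

assert b1 >> 5 == 0b110  # lead byte of a two-byte sequence
assert b2 >> 6 == 0b10   # continuation byte

# Strip the control bits and glue the 5 + 6 payload bits together.
code_point = ((b1 & 0b00011111) << 6) | (b2 & 0b00111111)
print(f"{code_point:011b}", f"U+{code_point:04X}", chr(code_point))
# 10000111100 U+043C м

# Cross-check with Python's own decoder.
assert bytes([b1, b2]).decode("utf-8") == "м"
```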

The initial bits for a three-byte character are 1110.

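11100010 10000010 10101100: dropping the control bits (1110, 10 and 10) leaves 0010000010101100, which is 20AC in hex, U+20AC, the euro sign “€”. Three bytes leave only 16 bits for the code point, so they reach no further than U+FFFF; the Old Persian number one hundred, U+103D5 (10000001111010101), needs 17 bits and therefore takes four bytes.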

Four-byte character encodings begin with the lead bits 11110.

11110100 10001111 10111111 10111111 is U+10FFFF, the last code point in the Unicode range (100001111111111111111). The Old Persian number one hundred, U+103D5, is encoded the same way as 11110000 10010000 10001111 10010101.
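Putting the marker rules together gives a small decoder for a single character. This is a teaching sketch under stated assumptions: `decode_one` is a hypothetical helper, the euro sign is an extra three-byte example, and, unlike a production decoder, it does not reject overlong encodings or encoded surrogates.

```python
def decode_one(data: bytes) -> tuple[int, int]:
    """Decode the first UTF-8 character in data; return (code_point, bytes_used).

    Hypothetical teaching helper: checks marker bits only, not every rule
    a real decoder enforces (e.g. overlong forms, surrogate code points).
    """
    lead = data[0]
    if lead >> 7 == 0b0:          # 0xxxxxxx
        length, payload = 1, lead
    elif lead >> 5 == 0b110:      # 110xxxxx
        length, payload = 2, lead & 0b00011111
    elif lead >> 4 == 0b1110:     # 1110xxxx
        length, payload = 3, lead & 0b00001111
    elif lead >> 3 == 0b11110:    # 11110xxx
        length, payload = 4, lead & 0b00000111
    else:                         # 10xxxxxx or 11111xxx cannot start a character
        raise ValueError(f"{lead:08b} cannot start a character")
    if len(data) < length:
        raise ValueError("truncated sequence")
    for byte in data[1:length]:
        if byte >> 6 != 0b10:
            raise ValueError(f"{byte:08b} is not a continuation byte")
        payload = (payload << 6) | (byte & 0b00111111)  # append 6 more bits
    return payload, length

examples = (
    "11010000 10111100",                    # м
    "11100010 10000010 10101100",           # €
    "11110000 10010000 10001111 10010101",  # Old Persian number one hundred
    "11110100 10001111 10111111 10111111",  # last code point
)
for bits in examples:
    raw = bytes(int(group, 2) for group in bits.split())
    cp, used = decode_one(raw)
    print(f"U+{cp:04X} from {used} byte(s)")
# U+043C from 2 byte(s)
# U+20AC from 3 byte(s)
# U+103D5 from 4 byte(s)
# U+10FFFF from 4 byte(s)
```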

Now, we can easily write our multi-language phrase in UTF-8 encoding.
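Going the other direction, here is a minimal UTF-8 encoder that follows the same bit layouts (`encode_code_point` and `encode_utf8` are illustrative names). It skips some validity checks a real encoder performs, such as refusing lone surrogate code points, so treat it as a sketch; it is cross-checked against Python’s built-in codec.

```python
def encode_code_point(cp: int) -> bytes:
    """Encode one code point with the UTF-8 bit layouts (hypothetical helper)."""
    if cp < 0x80:        # up to 7 bits:  0xxxxxxx
        return bytes([cp])
    if cp < 0x800:       # up to 11 bits: 110xxxxx 10xxxxxx
        return bytes([0b11000000 | (cp >> 6),
                      0b10000000 | (cp & 0x3F)])
    if cp < 0x10000:     # up to 16 bits: 1110xxxx 10xxxxxx 10xxxxxx
        return bytes([0b11100000 | (cp >> 12),
                      0b10000000 | ((cp >> 6) & 0x3F),
                      0b10000000 | (cp & 0x3F)])
    if cp <= 0x10FFFF:   # up to 21 bits: 11110xxx 10xxxxxx 10xxxxxx 10xxxxxx
        return bytes([0b11110000 | (cp >> 18),
                      0b10000000 | ((cp >> 12) & 0x3F),
                      0b10000000 | ((cp >> 6) & 0x3F),
                      0b10000000 | (cp & 0x3F)])
    raise ValueError("beyond U+10FFFF")

def encode_utf8(text: str) -> bytes:
    return b"".join(encode_code_point(ord(ch)) for ch in text)

encoded = encode_utf8("Hello мир")
assert encoded == "Hello мир".encode("utf-8")  # matches Python's built-in codec
print(encoded.hex(" "))  # 48 65 6c 6c 6f 20 d0 bc d0 b8 d1 80
```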

UTF-16

UTF-16 is another variable-width encoding. The main difference between UTF-16 and UTF-8 is that UTF-16 uses 2 bytes (16 bits) as its basic unit instead of 1 byte (8 bits). In other words, any Unicode character encoded in UTF-16 takes either two or four bytes. To keep things simple, I will refer to these two bytes as a code unit. So, in UTF-16 any character can be represented using either one or two code units.

Let’s start with symbols encoded using one code unit. We can easily calculate that one 16-bit code unit can hold 65,536 (2¹⁶) values, which lines up exactly with the size of the Basic Multilingual Plane of Unicode. All characters in this plane are represented by one code unit (two bytes) in UTF-16.

Latin letter “o” — 00000000 01101111.

Cyrillic letter “М” — 00000100 00011100.

Now let’s consider characters outside the basic multilingual plane. These require two code units (4 bytes) and are encoded in a slightly more complicated way.
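Python’s `utf-16-be` codec lets us count code units directly. In this sketch (`utf16_units` is an illustrative helper, and big-endian byte order is an assumption made to match the bit patterns shown above), characters in the Basic Multilingual Plane take one code unit and U+103D5 takes two:

```python
def utf16_units(text: str) -> list[int]:
    """Split text into 16-bit UTF-16 code units (big-endian, no byte order mark)."""
    raw = text.encode("utf-16-be")
    # Every two bytes form one code unit.
    return [int.from_bytes(raw[i:i + 2], "big") for i in range(0, len(raw), 2)]

# "\u041c" is the Cyrillic capital letter М.
for ch in ("o", "\u041c", "\U000103D5"):
    units = utf16_units(ch)
    print(f"U+{ord(ch):04X}", len(units), " ".join(f"{u:016b}" for u in units))
# U+006F 1 0000000001101111
# U+041C 1 0000010000011100
# U+103D5 2 1101100000000000 1101111111010101
```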

First, we need to define the concept of a surrogate pair. A surrogate pair is two code units used to encode a single character (totaling 4 bytes). Unicode reserves a special range, D800 to DFFF, for surrogates, and no characters are assigned there. This means that when we read a 16-bit code unit whose value falls in this range, we know it is half of a surrogate pair rather than a separate character.

To encode a symbol in the range 10000 through 10FFFF (i.e., characters that require more than one code unit) we proceed as follows:

Subtract 10000 (hex) from the code point (10000 is the lowest code point in the range 10000 through 10FFFF).

We end up with a number of up to 20 bits, no greater than FFFFF.

The high-order 10 bits are added to D800 (the lowest code point in the surrogate range).

The low-order 10 bits are added to DC00 (also from the surrogate range).

We end up with 2 surrogate 16-bit code units, the first 6 bits of which mark each unit as part of a surrogate pair.

The last of those six bits (the sixth bit from the left) defines the order of the pair. If it is “0”, it’s the leading or high surrogate (110110…), and if it is “1”, it’s the trailing or low surrogate (110111…).

This will make a bit more sense when illustrated with the below example.

Let’s encode and then decode the Persian number one hundred (U+103D5):

103D5 − 10000 = 3D5.

3D5 = 0000000000 1111010101 (as a 20-bit number, the high 10 bits are all zero, which is 0 in hexadecimal, and the low 10 bits are 3D5).

0 + D800 = D800 (1101100000000000): the first 6 bits tell us that this code unit falls in the surrogate range, and the sixth bit from the left is “0”, so this is the high surrogate.

3D5 + DC00 = DFD5 (1101111111010101): the first 6 bits tell us that this code unit falls in the surrogate range, and the sixth bit from the left is “1”, so we know this is the low surrogate.

The resulting character encoded in UTF-16 looks like — 1101100000000000 1101111111010101.
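The encoding steps above translate almost line for line into code. A minimal Python sketch (`to_surrogate_pair` is a hypothetical helper), cross-checked against Python’s built-in UTF-16 codec:

```python
def to_surrogate_pair(cp: int) -> tuple[int, int]:
    """Split a code point in 10000..10FFFF into (high, low) UTF-16 surrogates."""
    if not 0x10000 <= cp <= 0x10FFFF:
        raise ValueError("only 10000 through 10FFFF need a surrogate pair")
    offset = cp - 0x10000            # at most 20 bits, no greater than FFFFF
    high = 0xD800 + (offset >> 10)   # high-order 10 bits
    low = 0xDC00 + (offset & 0x3FF)  # low-order 10 bits
    return high, low

high, low = to_surrogate_pair(0x103D5)
print(f"{high:04X} {low:04X}")    # D800 DFD5
print(f"{high:016b} {low:016b}")  # 1101100000000000 1101111111010101

# Cross-check against Python's built-in UTF-16 codec (big-endian, no BOM).
assert "\U000103D5".encode("utf-16-be") == high.to_bytes(2, "big") + low.to_bytes(2, "big")
```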

Now let’s decode a character. Let’s say we have the following pair of code units: 1101100000100010 1101111010001000.

We convert to hexadecimal = D822 DE88 (both code units fall in the surrogate range, so we know we’re dealing with a surrogate pair).

1101100000100010: the sixth bit from the left is “0”, so this is the high surrogate.

1101111010001000: the sixth bit from the left is “1”, so this is the low surrogate.

We drop the 6 bits identifying each unit as a surrogate and are left with 0000100010 1010001000 (8A88).

We add back 10000 (the value subtracted during encoding, i.e., the first code point beyond the Basic Multilingual Plane): 8A88 + 10000 = 18A88.

We look at the Unicode table for U+18A88 = Tangut Component-649.
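Decoding reverses the steps. A minimal Python sketch (`from_surrogate_pair` is a hypothetical helper) that checks the six marker bits, joins the two 10-bit halves, and adds back 10000 hex:

```python
def from_surrogate_pair(high: int, low: int) -> int:
    """Combine a UTF-16 surrogate pair back into a code point; hypothetical helper."""
    if high >> 10 != 0b110110:
        raise ValueError(f"{high:04X} is not a high surrogate")
    if low >> 10 != 0b110111:
        raise ValueError(f"{low:04X} is not a low surrogate")
    # Drop the six marker bits of each unit and join the remaining 10 + 10 bits.
    offset = ((high & 0x3FF) << 10) | (low & 0x3FF)
    # Add back the 0x10000 that was subtracted during encoding.
    return offset + 0x10000

cp = from_surrogate_pair(0b1101100000100010, 0b1101111010001000)
print(f"U+{cp:X}")  # U+18A88

# Cross-check with Python's built-in UTF-16 decoder.
assert bytes.fromhex("D822DE88").decode("utf-16-be") == chr(cp)
```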

Kudos to everyone who read this far!

I hope this has been informative without leaving you too bored.


About the translator

Alconost is a global provider of localization services for apps, games, videos, and websites into 70+ languages. We offer translations by native-speaking linguists, linguistic testing, cloud-based workflow, continuous localization, project management 24/7, and work with any format of string resources. We also make advertising and educational videos and images, teasers, explainers, and trailers for Google Play and the App Store.