PrivateGPT is a project that extends what LLMs can do by letting you add an unlimited amount of your own private data.

On October 31, 2023, AMD added PyTorch support for consumer Radeon graphics cards. The full list of supported GPUs and operating systems is available here. These instructions were tested on an AMD Radeon RX 7900 XTX.

To run it, you need Ubuntu with git, make, Docker and ROCm installed.
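Before going further, it can help to confirm the tools are actually on PATH. The sketch below is a hypothetical helper, `check_prereqs` (it is not part of the project), written as a POSIX sh function; it only checks that each command exists, not its version.

```shell
# Hypothetical helper: report which of the given commands are missing
# from PATH. Returns 0 if all are present, 1 otherwise.
check_prereqs() {
    missing=0
    for cmd in "$@"; do
        if command -v "$cmd" >/dev/null 2>&1; then
            printf 'ok:      %s\n' "$cmd"
        else
            printf 'missing: %s\n' "$cmd"
            missing=1
        fi
    done
    return "$missing"
}
```

After sourcing it, run `check_prereqs git make docker`; once ROCm is installed you can add `rocminfo` to the list.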

Install ROCm following AMD's instructions:

```shell
sudo apt update
sudo apt install "linux-headers-$(uname -r)" "linux-modules-extra-$(uname -r)"
sudo usermod -a -G render,video "$LOGNAME" # Add the current user to the render and video groups
# This installer package is for Ubuntu 24.04 (noble)
wget https://repo.radeon.com/amdgpu-install/6.2.4/ubuntu/noble/amdgpu-install_6.2.60204-1_all.deb
sudo apt install ./amdgpu-install_6.2.60204-1_all.deb
sudo apt update
sudo apt install amdgpu-dkms rocm
sudo reboot
```
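Group changes made with `usermod` only apply to new login sessions, which is one reason for the reboot. A minimal sketch for checking membership afterwards, using two hypothetical helpers built on `id -nG`:

```shell
# Hypothetical helper: succeed if the current user belongs to group $1.
in_group() {
    for g in $(id -nG); do
        [ "$g" = "$1" ] && return 0
    done
    return 1
}

# Hypothetical helper: check the two groups added by usermod above.
check_rocm_groups() {
    status=0
    for grp in render video; do
        if in_group "$grp"; then
            echo "$grp: ok"
        else
            echo "$grp: missing (log in again or reboot after usermod)"
            status=1
        fi
    done
    return "$status"
}
```

Run `check_rocm_groups` after the reboot; both lines should report `ok`.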

Install rocminfo:

```shell
sudo apt install rocminfo
# Then add ":/opt/rocm/bin" to PATH in /etc/environment
```
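The PATH edit can also be scripted. The hypothetical `add_rocm_to_path` below assumes PATH in /etc/environment is a single quoted `PATH="..."` line, as on a stock Ubuntu install, and leaves the file alone if /opt/rocm/bin is already listed. Editing /etc/environment requires root, and the new PATH takes effect at the next login.

```shell
# Hypothetical helper: append ":/opt/rocm/bin" to the quoted PATH="..."
# line of an environment file (default: /etc/environment).
add_rocm_to_path() {
    file=${1:-/etc/environment}
    rocm_bin=/opt/rocm/bin
    # Already present: nothing to do.
    if grep -q "^PATH=.*$rocm_bin" "$file"; then
        return 0
    fi
    tmp=$(mktemp) || return 1
    # Insert ":/opt/rocm/bin" just before the closing quote of PATH="...".
    # Writing back with cat keeps the file's owner and permissions.
    sed "s|^PATH=\"\([^\"]*\)\"|PATH=\"\1:$rocm_bin\"|" "$file" > "$tmp" && cat "$tmp" > "$file"
    status=$?
    rm -f "$tmp"
    return "$status"
}
```

For example, if the function is saved in a file called `rocm_path.sh` (a hypothetical name), run `sudo sh -c '. ./rocm_path.sh && add_rocm_to_path'` and check the result with `grep PATH /etc/environment`.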

Clone the project that configures and runs PrivateGPT in a Docker container:

```shell
git clone https://github.com/HardAndHeavy/private-gpt-rocm-docker
cd private-gpt-rocm-docker
```

Generate the PrivateGPT settings file (settings-gpt.yaml) with `make gen`. You can simply press Enter at every prompt, in which case the settings file gets the default values:

```text
Language model [IlyaGusev/saiga_mistral_7b_gguf]:
Language model file [model-q8_0.gguf]:
Language model for blocks [mistralai/Mistral-7B-Instruct-v0.2]:
Embedding model [intfloat/multilingual-e5-large]:
```

This produces settings-gpt.yaml, which you can fine-tune later once you know PrivateGPT better.
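To see at a glance which models the generated file points to, a small hypothetical helper can filter out the model-related keys. It assumes the key names used in the example settings for this setup (`tokenizer`, `llm_hf_repo_id`, `llm_hf_model_file`) and ignores which YAML section they sit in.

```shell
# Hypothetical helper: print the model-related keys from a settings file
# (default: settings-gpt.yaml), with leading indentation stripped.
show_model_settings() {
    grep -E '^[[:space:]]*(tokenizer|llm_hf_repo_id|llm_hf_model_file):' "${1:-settings-gpt.yaml}" |
        sed 's/^[[:space:]]*//'
}
```

Run it from the project directory as `show_model_settings`.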

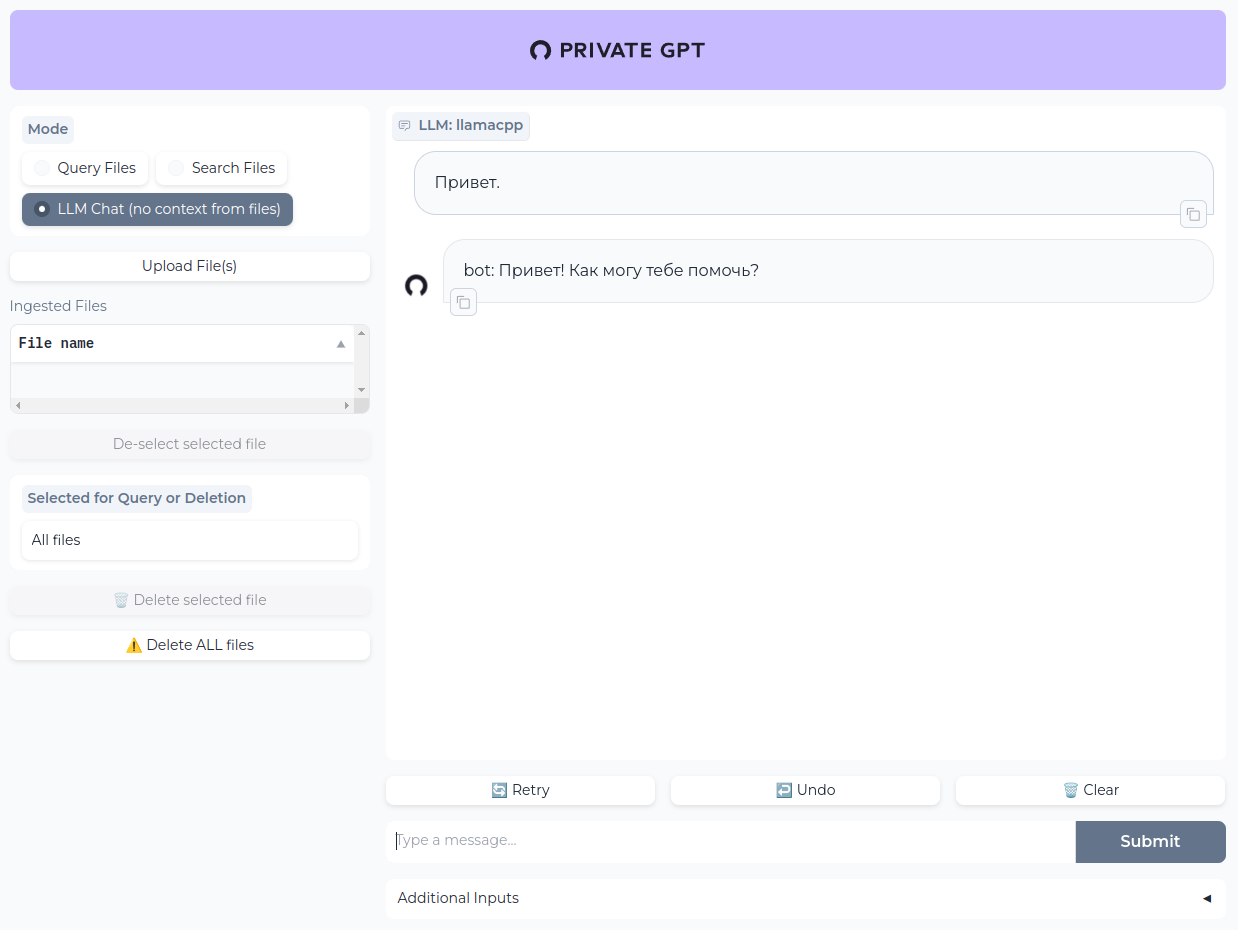

Start PrivateGPT with `make run`. The first start takes a long time because the models are downloaded. Once the download finishes, PrivateGPT is available at http://localhost.
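Because the first start can take a while, you may prefer to wait for the web UI from a script rather than by refreshing the browser. A sketch assuming curl is installed; `wait_for_url` is a hypothetical helper that polls every 5 seconds until the page answers successfully or the timeout expires.

```shell
# Hypothetical helper: poll URL $1 until it responds with a non-error HTTP
# status, giving up after $2 seconds (default 600).
wait_for_url() {
    url=$1
    timeout=${2:-600}
    elapsed=0
    until curl -fsS -o /dev/null "$url" 2>/dev/null; do
        if [ "$elapsed" -ge "$timeout" ]; then
            echo "timed out after ${timeout}s waiting for $url" >&2
            return 1
        fi
        sleep 5
        elapsed=$((elapsed + 5))
    done
    echo "$url is up"
}
```

With `make run` going in one terminal, run `wait_for_url http://localhost 1800` in another.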

Startup log

```text
docker run -it --rm \
	-p 80:8080 \
	-v ./local_data/:/app/local_data \
	-v ./models/:/app/models \
	-e PGPT_PROFILES=gpt \
	-v ./settings-gpt.yaml:/app/settings-gpt.yaml \
	--device=/dev/kfd \
	--device=/dev/dri \
	hardandheavy/private-gpt-rocm:latest
10:47:50.938 [INFO ] private_gpt.settings.settings_loader - Starting application with profiles=['default', 'gpt']
Downloading embedding intfloat/multilingual-e5-large
Fetching 19 files: 100%|████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████████| 19/19 [00:01<00:00, 17.22it/s]
Embedding model downloaded!
Downloading LLM model-q8_0.gguf
LLM model downloaded!
Downloading tokenizer mistralai/Mistral-7B-Instruct-v0.2
Tokenizer downloaded!
Setup done
poetry run python -m private_gpt
10:47:54.978 [INFO ] private_gpt.settings.settings_loader - Starting application with profiles=['default', 'gpt']
10:48:00.396 [INFO ] matplotlib.font_manager - generated new fontManager
10:48:01.914 [INFO ] private_gpt.components.llm.llm_component - Initializing the LLM in mode=llamacpp
ggml_init_cublas: GGML_CUDA_FORCE_MMQ: no
ggml_init_cublas: CUDA_USE_TENSOR_CORES: yes
ggml_init_cublas: found 1 ROCm devices:
  Device 0: Radeon RX 7900 XTX, compute capability 11.0, VMM: no
llama_model_loader: loaded meta data with 21 key-value pairs and 291 tensors from /app/models/model-q8_0.gguf (version GGUF V2)
llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.
llama_model_loader: - kv 0: general.architecture str = llama
llama_model_loader: - kv 1: general.name str = models
llama_model_loader: - kv 2: llama.context_length u32 = 32768
llama_model_loader: - kv 3: llama.embedding_length u32 = 4096
llama_model_loader: - kv 4: llama.block_count u32 = 32
llama_model_loader: - kv 5: llama.feed_forward_length u32 = 14336
llama_model_loader: - kv 6: llama.rope.dimension_count u32 = 128
llama_model_loader: - kv 7: llama.attention.head_count u32 = 32
llama_model_loader: - kv 8: llama.attention.head_count_kv u32 = 8
llama_model_loader: - kv 9: llama.attention.layer_norm_rms_epsilon f32 = 0.000010
llama_model_loader: - kv 10: llama.rope.freq_base f32 = 10000.000000
llama_model_loader: - kv 11: general.file_type u32 = 7
llama_model_loader: - kv 12: tokenizer.ggml.model str = llama
llama_model_loader: - kv 13: tokenizer.ggml.tokens arr[str,32002] = ["<unk>", "<s>", "</s>", "<0x00>", "<...
llama_model_loader: - kv 14: tokenizer.ggml.scores arr[f32,32002] = [0.000000, 0.000000, 0.000000, 0.0000...
llama_model_loader: - kv
llama_model_loader: - kv 16: tokenizer.ggml.bos_token_id u32 = 1
llama_model_loader: - kv 17: tokenizer.ggml.eos_token_id u32 = 2
llama_model_loader: - kv 18: tokenizer.ggml.unknown_token_id u32 = 0
llama_model_loader: - kv 19: tokenizer.ggml.padding_token_id u32 = 0
llama_model_loader: - kv 20: general.quantization_version u32 = 2
llama_model_loader: - type f32: 65 tensors
llama_model_loader: - type q8_0: 226 tensors
llm_load_vocab: special tokens definition check successful ( 261/32002 ).
llm_load_print_meta: format = GGUF V2
llm_load_print_meta: arch = llama
llm_load_print_meta: vocab type = SPM
llm_load_print_meta: n_vocab = 32002
llm_load_print_meta: n_merges = 0
llm_load_print_meta: n_ctx_train = 32768
llm_load_print_meta: n_embd = 4096
llm_load_print_meta: n_head = 32
llm_load_print_meta: n_head_kv = 8
llm_load_print_meta: n_layer = 32
llm_load_print_meta: n_rot = 128
llm_load_print_meta: n_embd_head_k = 128
llm_load_print_meta: n_embd_head_v = 128
llm_load_print_meta: n_gqa = 4
llm_load_print_meta: n_embd_k_gqa = 1024
llm_load_print_meta: n_embd_v_gqa = 1024
llm_load_print_meta: f_norm_eps = 0.0e+00
llm_load_print_meta: f_norm_rms_eps = 1.0e-05
llm_load_print_meta: f_clamp_kqv = 0.0e+00
llm_load_print_meta: f_max_alibi_bias = 0.0e+00
llm_load_print_meta: n_ff = 14336
llm_load_print_meta: n_expert = 0
llm_load_print_meta: n_expert_used = 0
llm_load_print_meta: pooling type = 0
llm_load_print_meta: rope type = 0
llm_load_print_meta: rope scaling = linear
llm_load_print_meta: freq_base_train = 10000.0
llm_load_print_meta: freq_scale_train = 1
llm_load_print_meta: n_yarn_orig_ctx = 32768
llm_load_print_meta: rope_finetuned = unknown
llm_load_print_meta: ssm_d_conv = 0
llm_load_print_meta: ssm_d_inner = 0
llm_load_print_meta: ssm_d_state = 0
llm_load_print_meta: ssm_dt_rank = 0
llm_load_print_meta: model type = 7B
llm_load_print_meta: model ftype = Q8_0
llm_load_print_meta: model params = 7.24 B
llm_load_print_meta: model size = 7.17 GiB (8.50 BPW)
llm_load_print_meta: general.name = models
llm_load_print_meta: BOS token = 1 '<s>'
llm_load_print_meta: EOS token = 2 '</s>'
llm_load_print_meta: UNK token = 0 '<unk>'
llm_load_print_meta: PAD token = 0 '<unk>'
llm_load_print_meta: LF token = 13 '<0x0A>'
llm_load_tensors: ggml ctx size = 0.22 MiB
llm_load_tensors: offloading 32 repeating layers to GPU
llm_load_tensors: offloading non-repeating layers to GPU
llm_load_tensors: offloaded 33/33 layers to GPU
llm_load_tensors: ROCm0 buffer size = 7205.84 MiB
llm_load_tensors: CPU buffer size = 132.82 MiB
...................................................................................................
llama_new_context_with_model: n_ctx = 3900
llama_new_context_with_model: freq_base = 10000.0
llama_new_context_with_model: freq_scale = 1
llama_kv_cache_init: ROCm0 KV buffer size = 487.50 MiB
llama_new_context_with_model: KV self size = 487.50 MiB, K (f16): 243.75 MiB, V (f16): 243.75 MiB
llama_new_context_with_model: ROCm_Host input buffer size = 16.65 MiB
llama_new_context_with_model: ROCm0 compute buffer size = 283.37 MiB
llama_new_context_with_model: ROCm_Host compute buffer size = 8.00 MiB
llama_new_context_with_model: graph splits (measure): 2
AVX = 1 | AVX_VNNI = 0 | AVX2 = 1 | AVX512 = 0 | AVX512_VBMI = 0 | AVX512_VNNI = 0 | FMA = 1 | NEON = 0 | ARM_FMA = 0 | F16C = 1 | FP16_VA = 0 | WASM_SIMD = 0 | BLAS = 1 | SSE3 = 1 | SSSE3 = 1 | VSX = 0 | MATMUL_INT8 = 0 |
Model metadata: {'tokenizer.ggml.padding_token_id': '0', 'tokenizer.ggml.unknown_token_id': '0', 'tokenizer.ggml.eos_token_id': '2', 'general.architecture': 'llama', 'llama.rope.freq_base': '10000.000000', 'llama.context_length': '32768', 'general.name': 'models', 'llama.embedding_length': '4096', 'llama.feed_forward_length': '14336', 'llama.attention.layer_norm_rms_epsilon': '0.000010', 'llama.rope.dimension_count': '128', 'tokenizer.ggml.bos_token_id': '1', 'llama.attention.head_count': '32', 'llama.block_count': '32', 'llama.attention.head_count_kv': '8', 'general.quantization_version': '2', 'tokenizer.ggml.model': 'llama', 'general.file_type': '7'}
Using fallback chat format: None
10:50:22.172 [INFO ] private_gpt.components.embedding.embedding_component - Initializing the embedding model in mode=huggingface
10:51:15.008 [INFO ] llama_index.core.indices.loading - Loading all indices.
10:51:15.301 [INFO ] private_gpt.ui.ui - Mounting the gradio UI, at path=/
10:51:15.437 [INFO ] uvicorn.error - Started server process [50]
10:51:15.437 [INFO ] uvicorn.error - Waiting for application startup.
10:51:15.438 [INFO ] uvicorn.error - Application startup complete.
10:51:15.441 [INFO ] uvicorn.error - Uvicorn running on http://0.0.0.0:8080 (Press CTRL+C to quit)
```
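Two lines in this log show the GPU is actually in use: `found 1 ROCm devices` and `offloaded 33/33 layers to GPU`, the latter meaning every model layer was placed on the RX 7900 XTX rather than left on the CPU. A sketch of a hypothetical helper that reads a saved startup log on stdin and checks that all layers were offloaded:

```shell
# Hypothetical helper: read a llama.cpp startup log on stdin and check
# that all layers were offloaded ("offloaded N/N layers to GPU").
check_gpu_offload() {
    line=$(grep -o 'offloaded [0-9]*/[0-9]* layers to GPU' | tail -n 1)
    if [ -z "$line" ]; then
        echo "no offload line found (is the model running on the CPU?)"
        return 1
    fi
    counts=${line#offloaded }   # "33/33 layers to GPU"
    counts=${counts%% *}        # "33/33"
    done_layers=${counts%/*}
    total_layers=${counts#*/}
    echo "GPU layers: $done_layers of $total_layers"
    [ "$done_layers" -eq "$total_layers" ]
}
```

If you saved the output of `make run` to a file such as `run.log` (a hypothetical name), run `check_gpu_offload < run.log`.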

Example settings

Mistral

```yaml
tokenizer: mistralai/Mistral-7B-Instruct-v0.2
llm_hf_repo_id: IlyaGusev/saiga_mistral_7b_gguf
llm_hf_model_file: model-q8_0.gguf
```

LLaMA

```yaml
tokenizer: TheBloke/Llama-2-13B-fp16
llm_hf_repo_id: IlyaGusev/saiga2_13b_gguf
llm_hf_model_file: model-q8_0.gguf
```
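To switch settings-gpt.yaml between these examples without opening an editor, here is a sketch of a hypothetical `set_setting` helper. It rewrites the value of a `key: value` line while keeping its indentation. It assumes each key appears once in the file and that values contain no `|` or `&` characters, because both are special in the sed expression used here.

```shell
# Hypothetical helper: set "key: value" in a YAML file, keeping indentation.
# Fails if the key is not present, so typos are not silently ignored.
set_setting() {
    file=$1 key=$2 value=$3
    grep -q "^[[:space:]]*$key:" "$file" || { echo "$key not found in $file" >&2; return 1; }
    tmp=$(mktemp) || return 1
    sed "s|^\([[:space:]]*$key:\).*|\1 $value|" "$file" > "$tmp" && cat "$tmp" > "$file"
    status=$?
    rm -f "$tmp"
    return "$status"
}
```

For the LLaMA example, run `set_setting settings-gpt.yaml tokenizer TheBloke/Llama-2-13B-fp16` and `set_setting settings-gpt.yaml llm_hf_repo_id IlyaGusev/saiga2_13b_gguf`, then restart with `make run`.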