- First of all, you can read this article in Russian here.

One evening, while reading Mastering Regular Expressions by Jeffrey Friedl, I realized that even if you have all the documentation and a lot of experience, there are still many tricks that different people have developed and kept to themselves. All people are different, and techniques that are obvious to some may not be obvious to others and can look like weird magic to a third person. By the way, I have already described several such moments here (in Russian).

For an administrator or a user, the command line is not only a tool that can do everything, but also a highly customizable tool that can be developed forever. Recently there was a translated article about some useful CLI tricks. But I feel that the translator did not have enough experience with the CLI and did not try the tricks described, so many important things could have been missed or misunderstood.

Below are a dozen Linux shell tricks from my personal experience.

Note: All scripts and examples in the article were deliberately simplified as much as possible, so if some of the tricks look completely useless, perhaps this is the reason. But in any case, share your thoughts in the comments!

1. Split string with variable expansions

People often use cut or even awk just to extract part of a string by a pattern or by separators.

Many people also use the bash substring operation ${VARIABLE:start_position:length}, which works very fast.

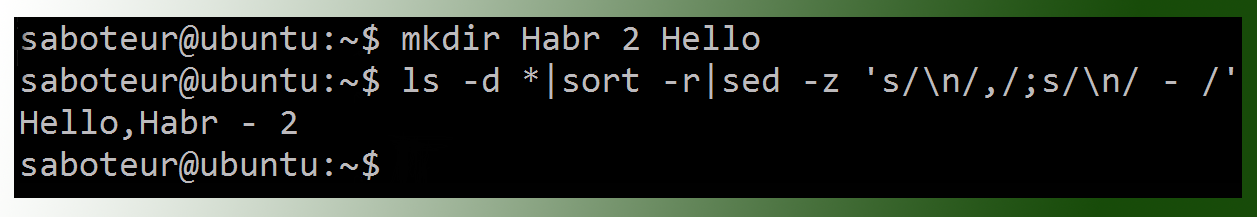

But bash provides a powerful way to manipulate text strings using #, ##, % and %%. This is part of bash variable (parameter) expansion.

Using this syntax, you can cut out what you need by a pattern without executing external commands, so it works really fast.

The example below shows how to get the third column (shell) from a string whose values are separated by colons, «username:homedir:shell», using cut or using variable expansion (we use the *: mask and the ## operator, which means: cut all characters from the left up to and including the last colon found):

    $ STRING="username:homedir:shell"
    $ echo "$STRING"|cut -d ":" -f 3
    shell
    $ echo "${STRING##*:}"
    shell

The second option does not start a child process (cut) and does not use pipes at all, so it should work much faster. And if you are using the bash subsystem on Windows, where pipes barely move, the speed difference will be significant.

Let's look at an example on Ubuntu and run each command 1000 times in a loop:

    $ cat test.sh
    #!/usr/bin/env bash
    STRING="Name:Date:Shell"
    echo "using cut"
    time for A in {1..1000}
    do
      cut -d ":" -f 3 > /dev/null <<<"$STRING"
    done
    echo "using ##"
    time for A in {1..1000}
    do
      echo "${STRING##*:}" > /dev/null
    done

Results

    $ ./test.sh
    using cut
    real 0m0.950s
    user 0m0.012s
    sys  0m0.232s
    using ##
    real 0m0.011s
    user 0m0.008s
    sys  0m0.004s

The difference is more than 80 times!

Of course, the example above is too artificial. In a real case we would not work with a static string; we would read a real file. For the 'cut' command, we simply redirect /etc/passwd into it. In the case of ##, we have to write a loop and read the file with the built-in 'read' command. So who wins in this case?

    $ cat test.sh
    #!/usr/bin/env bash
    echo "using cut"
    time for count in {1..1000}
    do
      cut -d ":" -f 7 </etc/passwd > /dev/null
    done
    echo "using ##"
    time for count in {1..1000}
    do
      while read
      do
        echo "${REPLY##*:}" > /dev/null
      done </etc/passwd
    done

Result

No comments =)

    $ ./test.sh
    using cut
    real 0m0.827s
    user 0m0.004s
    sys  0m0.208s
    using ##
    real 0m0.613s
    user 0m0.436s
    sys  0m0.172s

A couple more examples:

Extract the value after the equals sign:

    $ VAR="myClassName = helloClass"
    $ echo "${VAR##*= }"
    helloClass

Extract the text in round brackets:

    $ VAR="Hello my friend (enemy)"
    $ TEMP="${VAR##*\(}"
    $ echo "${TEMP%\)}"
    enemy
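To put the four operators side by side, here is a minimal sketch. The path in FILE is a made-up sample value used only for illustration; the comments show what each expansion leaves behind.

```shell
#!/usr/bin/env bash
# Hypothetical sample value, used only to show the four operators.
FILE="/var/log/app/server.access.log"

echo "${FILE#*/}"    # shortest match removed from the left:  var/log/app/server.access.log
echo "${FILE##*/}"   # longest match removed from the left:   server.access.log
echo "${FILE%.*}"    # shortest match removed from the right: /var/log/app/server.access
echo "${FILE%%.*}"   # longest match removed from the right:  /var/log/app/server
```

A single character (# or %) removes the shortest match, a doubled one (## or %%) removes the longest.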

2. Bash autocompletion with tab

The bash-completion package is part of almost every Linux distribution. You can enable it in /etc/bash.bashrc or /etc/profile.d/bash_completion.sh, but usually it is already enabled by default. In general, autocompletion is one of the first conveniences a newcomer meets in the Linux shell.

But not everyone uses all of the bash-completion features, which in my opinion is a pity. For example, not everybody knows that autocompletion works not only with file names but also with aliases, variable names, function names and, for some commands, even with arguments. If you dig into the autocompletion scripts, which are actually shell scripts, you can even add autocompletion for your own application or script.
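As a rough sketch of what such a completion script can look like, the snippet below registers completions for a script called myapp. The script name and its subcommands (start, stop, restart, status) are assumptions made up for this illustration; complete and compgen are bash built-ins.

```shell
#!/usr/bin/env bash
# Hypothetical script name and subcommands, used only for illustration.
_myapp_complete() {
    local current="${COMP_WORDS[COMP_CWORD]}"
    # compgen -W filters the word list by the prefix typed so far.
    COMPREPLY=( $(compgen -W "start stop restart status" -- "$current") )
}

# Register the function as the completion handler for the command "myapp".
complete -F _myapp_complete myapp
```

After sourcing this from a profile script, typing myapp re and pressing Tab should complete to restart.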

But let’s come back to the aliases.

You don't need to edit the PATH variable or create files in a specific directory to run an alias. You just need to add aliases to your profile or startup script, and you can execute them from anywhere.

We usually use lowercase letters for files and directories in *nix, so it can be very convenient to create aliases that start with uppercase letters. In that case bash-completion needs only a couple of typed characters to find them:

    $ alias TAsteriskLog="tail -f /var/log/asteriks.log"
    $ alias TMailLog="tail -f /var/log/mail.log"
    $ TA[tab]steriskLog
    $ TM[tab]ailLog

3. Bash autocompletion with tab — part 2

For more complicated cases, you would probably like to put your personal scripts into $HOME/bin.

But we have functions in bash.

Functions don't require a path or separate files. And (attention) bash-completion works with functions too.

Let's create a function LastLogin in .profile (don't forget to reload .profile):

    function LastLogin {
        # Local lowercase names avoid overwriting the USER and SHELL environment variables
        local line user ip shell
        line=$(last | head -n 1 | tr -s " ")
        user=$(echo "$line" | cut -d " " -f 1)
        ip=$(echo "$line" | cut -d " " -f 3)
        shell=$(grep "^$user:" /etc/passwd | cut -d ":" -f 7)
        echo "User: $user, IP: $ip, SHELL=$shell"
    }

(Actually, it does not matter what this function does. It is just an example that we could put into a separate script or even into an alias, but a function can be better.)

In the console (please note that the function name starts with an uppercase letter to speed up bash-completion):

    $ L[tab]astLogin
    User: saboteur, IP: 10.0.2.2, SHELL=/bin/bash

4.1. Sensitive data

If you put a space before a command in the console, it will not appear in the command history. So if you need to type a plain-text password in a command, this feature is a good way to do it. In the example below, echo "my password secretmegakey" does not appear in the history:

    $ echo "hello"
    hello
    $ history 2
    2011 echo "hello"
    2012 history 2
    $  echo "my password secretmegakey" # there are two spaces before 'echo'
    my password secretmegakey
    $ history 2
    2011 echo "hello"
    2012 history 2

This behavior is optional. It is usually enabled by default, but you can configure it with the following variable (ignoreboth combines ignorespace and ignoredups):

export HISTCONTROL=ignoreboth


4.2. Sensitive data in command line arguments

Suppose you want to store some shell scripts in git to share them across servers, or maybe they are part of an application startup script. And you want such a script to connect to a database or do anything else that requires credentials.

Of course, it is a bad idea to store credentials in the script itself, because everyone with access to the repository can read them.

Usually you can use variables that are already defined in the target environment, so your script does not contain the passwords itself.

For example, you can create a small script in each environment with 700 permissions and call it with the source command from the main script:

secret.sh

    PASSWORD=LOVESEXGOD

myapp.sh

    source ~/secret.sh
    sqlplus -l user/"$PASSWORD"@database:port/sid @mysqfile.sql

But it is not secure.

If somebody else can log in to your host, they can just run the ps command and see your sqlplus process with all of its command line arguments, including passwords. So secure tools should usually be able to read passwords, keys and other sensitive data directly from files.

For example, the secure ssh has no option at all for providing a password on the command line. But it can read an ssh key from a file (and you can set secure permissions on the ssh key file).

The non-secure wget, on the other hand, has a "--password" option that lets you provide a password on the command line. For as long as wget is running, anyone can run ps and see the password you provided.

In addition, if you have a lot of sensitive data and want to keep it under control in git, the only way is encryption. You put only a master password on every target environment, and all other data can be encrypted and stored in git. You can work with the encrypted data from the command line using the openssl CLI. Here is an example of encrypting and decrypting from the command line:

File secret.key contains master key — a single line:

$ echo "secretpassword" > secret.key; chmod 600 secret.key

Let's use aes-256-cbc to encrypt a string:

    $ echo "string_to_encrypt" | openssl enc -pass file:secret.key -e -aes-256-cbc -a
    U2FsdGVkX194R0GmFKCL/krYCugS655yLhf8aQyKNcUnBs30AE5lHN5MXPjjSFML

You can put this encrypted string into any configuration file stored in git, or in any other place. Without secret.key it is almost impossible to decrypt it.

To decrypt, execute the same command but replace -e with -d:

    $ echo 'U2FsdGVkX194R0GmFKCL/krYCugS655yLhf8aQyKNcUnBs30AE5lHN5MXPjjSFML' | openssl enc -pass file:secret.key -d -aes-256-cbc -a
    string_to_encrypt
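In a script, the two commands can be wrapped into small helpers. This is only a sketch: the function names and the KEY_FILE variable are hypothetical, and the openssl flags are exactly the ones used above. Newer OpenSSL releases may print a warning about the key derivation on stderr with these flags.

```shell
#!/usr/bin/env bash
# Hypothetical helper names; the openssl flags are the same ones used above.
KEY_FILE="${KEY_FILE:-secret.key}"

encrypt_value() {
    # Reads the plain text from stdin and prints base64 cipher text.
    openssl enc -pass "file:$KEY_FILE" -e -aes-256-cbc -a
}

decrypt_value() {
    # Reads the base64 cipher text from stdin and prints the plain text.
    openssl enc -pass "file:$KEY_FILE" -d -aes-256-cbc -a
}
```

A startup script could then read a stored value with something like DB_PASSWORD=$(decrypt_value < db_password.enc), where db_password.enc is an assumed file name. Keep in mind that the decrypted value still must not be passed to another program as a command line argument.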

5. The grep command

Everybody should know the grep command and be on friendly terms with regular expressions. Often you write something like:

tail -f application.log | grep -i error

Or even like this:

tail -f application.log | grep -i -P "(error|warning|failure)"

But don't forget that grep has a lot of wonderful options. For example -v, which inverts your search and shows everything except «info» messages:

tail -f application.log | grep -v -i "info"

Additional stuff:

The -P option is very useful: by default grep uses the rather dated «basic regular expressions», in which characters such as ( ) and | do not work as grouping or alternation operators unless escaped, while -P enables PCRE.

-i ignores case.

--line-buffered flushes the output after every line instead of waiting for the standard 4k buffer to fill (useful when you pipe the output of tail -f | grep into another command).

If you know regular expressions well, with --only-matching/-o you can do really great things when cutting text. Just compare the next two commands that extract myuser's shell:

    $ grep myuser /etc/passwd| cut -d ":" -f 7
    $ grep -Po "^myuser(:.*){5}:\K.*" /etc/passwd

The second command looks more complicated, but it runs only grep instead of grep and cut, so it takes less time to execute.
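Another small sketch of -o, this time with -E (extended regular expressions) instead of -P, so that it does not depend on PCRE support. The log lines and the function name are invented for this illustration.

```shell
#!/usr/bin/env bash
# Hypothetical log lines, used only for illustration.
LOG_SAMPLE='2019-03-01 12:00:01 ERROR login failed from 10.0.2.2
2019-03-01 12:00:05 INFO  login ok from 10.0.2.15
2019-03-01 12:00:09 ERROR timeout from 192.168.1.7'

# Keep ERROR lines only, then print just the IPv4-looking part of each line.
extract_error_ips() {
    grep 'ERROR' | grep -oE '([0-9]{1,3}\.){3}[0-9]{1,3}'
}

printf '%s\n' "$LOG_SAMPLE" | extract_error_ips
```

With -o, grep prints only the matched text, one match per line, so no cut or awk is needed afterwards.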

6. How to reduce log file size

In *nix, if you delete a log file that is currently in use by an application, you not only remove all the logs, you can also prevent the application from writing new logs until it is restarted.

This happens because a file descriptor refers not to the file name but to the inode. The application continues to write through its file descriptor to a file that no longer has a directory entry, and the file system deletes that file automatically once the application stops. (Your application can open and close the log file every time it wants to write something to avoid this issue, but that affects performance.)

So, how do you clear a log file without deleting it:

echo "" > application.log

Or we can use truncate command:

truncate --size=1M application.log

Note that the truncate command deletes the rest of the file, so you will lose the latest log events. Here is another example that keeps the last 1000 lines:

echo "$(tail -n 1000 application.log)" > application.log

P.S. Linux has a standard tool for this, logrotate. You can add your logs to its automated truncate/rotate configuration, or use existing logging libraries that can do it for you (like log4j in Java).
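The "keep the last N lines" idea can also be written as a small function. This is a sketch under assumptions: trim_log is a hypothetical name, and lines that the application writes between the tail and the cat steps are lost.

```shell
#!/usr/bin/env bash
# Hypothetical helper: keep only the last N lines of a log file in place.
trim_log() {
    local file=$1 lines=$2 tmp
    tmp=$(mktemp) || return 1
    tail -n "$lines" "$file" > "$tmp"
    # cat with ">" rewrites the existing file, so the inode the application
    # holds open stays the same (mv would replace it with a new inode).
    cat "$tmp" > "$file"
    rm -f "$tmp"
}
```

For example, trim_log application.log 1000 keeps the last 1000 lines. The temporary file avoids holding the whole tail in a shell variable, which may matter for long lines.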

7. Watch is watching for you!

Sometimes you are waiting for some event to finish. For example, you are waiting for another user to log in to the shell (and keep running the who command), or someone is copying a file to your machine using scp or ftp and you are waiting for it to complete (repeating ls dozens of times).

In such cases, you can use

watch <command>

By default, the command is executed every 2 seconds, with the screen cleared before each run, until you press Ctrl+C. You can change the interval with the -n option.

It is very useful when you want to watch live logs.

8. Bash sequence

There is a very useful construct for creating ranges. For example, instead of something like this:

    for srv in 1 2 3 4 5; do echo "server${srv}";done
    server1
    server2
    server3
    server4
    server5

You can write the following:

    for srv in server{1..5}; do echo "$srv";done
    server1
    server2
    server3
    server4
    server5

You can also use the seq command to generate formatted ranges. For example, we can use seq to create values that are automatically padded to the same width (00, 01 instead of 0, 1):

    for srv in $(seq -w 5 10); do echo "server${srv}";done
    server05
    server06
    server07
    server08
    server09
    server10
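Brace expansion can pad and step on its own as well, without calling seq. A short sketch, assuming bash 4 or newer (older versions do not support padding or steps in brace ranges):

```shell
#!/usr/bin/env bash
# Zero padding inside the braces is copied to every generated value (bash 4+).
for srv in server{05..10}; do echo "$srv"; done

# A third number sets the step: every second server from 1 to 9.
echo server{1..9..2}
```

The first loop prints server05 through server10, and the second command prints server1 server3 server5 server7 server9.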

Another example with command substitution: renaming files. To get the file name without its extension we use the 'basename' command:

for file in *.txt; do name=$(basename "$file" .txt); mv "$name"{.txt,.lst}; done

An even shorter version with '%':

for file in *.txt; do mv "${file%.txt}"{.txt,.lst}; done

P.S. Actually, for renaming files you can try the 'rename' tool, which has a lot of options.

Another example: let's create the structure for a new Java project:

mkdir -p project/src/{main,test}/{java,resources}

Result

    project/
    !--- src/
         |--- main/
         |    |-- java/
         |    !-- resources/
         !--- test/
              |-- java/
              !-- resources/

9. tail, multiple files, multiple users...

I have mentioned multitail for reading files and watching multiple live logs. But it is not installed by default, and permission to install something is not always available.

But the standard tail can do it too:

tail -f /var/logs/*.log

Also, let's remember the users who use 'tail -f' aliases to watch application logs.

Several users can watch log files simultaneously using 'tail -f'. Some of them are not very careful with their sessions. They may leave 'tail -f' in the background for some reason and forget about it.

If the application is restarted, these running 'tail -f' processes keep watching a log file that no longer exists and can hang around for several days or even months.

Usually it is not a big problem, but it is untidy.

If you are using an alias to watch the log, you can modify this alias with the --pid option:

alias TFapplog='tail -f --pid=$(cat /opt/app/tmp/app.pid) /opt/app/logs/app.log'

In that case, every tail will terminate automatically when the target application is restarted.
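The effect of --pid is easy to try out. In the sketch below, a one-second sleep stands in for the application and a temporary file stands in for its log; both are assumptions made for this demo. The --pid option belongs to GNU tail.

```shell
#!/usr/bin/env bash
# Stand-in for the application: a background process that lives for 1 second.
log_file=$(mktemp)
sleep 1 &
app_pid=$!

# tail follows the log only while the "application" PID is alive (GNU tail).
tail -f --pid="$app_pid" "$log_file" &
tail_pid=$!

wait "$app_pid"    # the application stops
wait "$tail_pid"   # returns once tail notices that and exits on its own
echo "tail exited after the application stopped"
rm -f "$log_file"
```

tail checks the PID periodically, so it may exit a moment after the watched process is gone rather than instantly.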

10. Create file with specified size

dd was one of the most popular tools for working with block and binary data. For example, creating a 10 MB file filled with zeros looks like this:

dd if=/dev/zero of=out.txt bs=1M count=10

But I recommend using fallocate:

fallocate -l 10M file.txt

On file systems that support the fallocate call (xfs, ext4, Btrfs...), fallocate runs instantly, unlike dd. In addition, fallocate really allocates the blocks instead of creating a sparse file.
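To see the difference between a sparse file and an allocated one, you can compare what ls and du report. This is a sketch with made-up file names in a temporary directory. The dd fallback is an addition for file systems without fallocate support, and the du numbers depend on the file system.

```shell
#!/usr/bin/env bash
work_dir=$(mktemp -d)

# Sparse file: the size is recorded, but no blocks are reserved.
truncate -s 10M "$work_dir/sparse.bin"

# Allocated file; falls back to dd if the file system lacks fallocate support.
fallocate -l 10M "$work_dir/real.bin" 2>/dev/null ||
    dd if=/dev/zero of="$work_dir/real.bin" bs=1M count=10 2>/dev/null

# ls shows the same apparent size for both; du shows the blocks really in use.
ls -l "$work_dir"
du -k "$work_dir/sparse.bin" "$work_dir/real.bin"
```

On a file system that supports fallocate, du should typically report close to zero for sparse.bin and about 10 MB for real.bin.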

11. xargs

Many people know the popular xargs command. But not all of them use the following two options, which can greatly improve your scripts.

First: the list of arguments to process can be very long and can exceed the maximum command line length (the limit is system-dependent; getconf ARG_MAX shows it).

But you can limit each execution with the -n option, so that xargs runs the command several times, passing the specified number of arguments at a time:

    $ # let's print 5 arguments and send them to echo with xargs:
    $ echo 1 2 3 4 5 | xargs echo
    1 2 3 4 5
    $ # now let's repeat, but limit argument processing to 3 per execution
    $ echo 1 2 3 4 5 | xargs -n 3 echo
    1 2 3
    4 5

Moving on. Processing a long list can take a lot of time, because the commands run one after another. But if we have several cores, we can tell xargs to run them in parallel:

echo 1 2 3 4 5 6 7 8 9 10| xargs -n 2 -P 3 echo

In the example above, we tell xargs to process the list with 3 parallel processes; each process takes 2 arguments per execution. If you don't know how many cores you have, let's optimize this using "nproc":

echo 1 2 3 4 5 6 7 8 9 10 | xargs -n 2 -P $(nproc) echo
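Both options can be checked quickly with seq as the input. A minimal sketch: the sort at the end is only there because parallel runs do not guarantee the order of the output lines.

```shell
#!/usr/bin/env bash
# -n 2: each echo call receives at most two arguments, so five inputs
# produce three calls (and three output lines).
seq 1 5 | xargs -n 2 echo

# -P runs the calls in parallel, so the line order is not guaranteed;
# sort restores a stable order for display.
seq 1 10 | xargs -n 2 -P "$(nproc)" echo | sort -n
```

The first command prints "1 2", "3 4" and "5" on separate lines.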

12. sleep? while? read!

Sometimes you need to wait for several seconds. Or wait for user input with read:

read -p "Press any key to continue " -n 1

But you can simply add the timeout option to the read command. Your script will pause for the specified number of seconds, and in an interactive session the user can easily skip the wait.

read -p "Press any key to continue (auto continue in 30 seconds) " -t 30 -n 1

So you can just forget about the sleep command.
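One caveat: when standard input is not a terminal (for example, it is redirected from /dev/null), read hits end of input and returns at once, so the pause is skipped. A small wrapper can cover both cases. This is only a sketch, and the function name pause is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: a skippable pause that still waits when non-interactive.
pause() {
    local seconds=$1
    if [ -t 0 ]; then
        # Interactive: any key ends the wait early.
        read -r -p "Press any key to continue (auto continue in ${seconds} seconds) " -t "$seconds" -n 1
        echo
    else
        # stdin is not a terminal: read would return immediately, so sleep.
        sleep "$seconds"
    fi
}
```

Calling pause 30 then behaves like the read example above in a terminal and like sleep 30 everywhere else.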

I suspect that not all of my tricks look interesting, but it seemed to me that a dozen is a good number to fill out.

With this I say goodbye, and I will be grateful if you take part in the survey.

Of course, feel free to discuss the above and share your cool tricks in the comments!


Did you find something new?

- Was surprised with ## and %%: 44.44% (4 votes)
- Fun thing to use read instead of sleep: 33.33% (3 votes)
- Didn't know about openssl useful CLI interface: 33.33% (3 votes)
- More than a half was useful!: 44.44% (4 votes)
- All talk and no trousers...: 22.22% (2 votes)

9 users voted. 2 users abstained.