In this article, I describe our multi-year journey of scaling the Elasticsearch cluster in production, one of the vital elements of the iPrice technology stack. I cover the challenges we encountered and how we approached them.

The iPrice e-commerce ecosystem has grown substantially since its initial release in 2014. The first implementation of our Elasticsearch (ES) architecture would not be able to handle the volume of indexing and search requests we serve today. It served us well while the dataset was relatively small, a few tens of gigabytes. The rapid growth of the product catalogue and of the traffic itself made us revise the architecture and follow best practices. The aim of this article is to reveal the technical details and to explain why particular steps were taken to solve the challenges that arose.

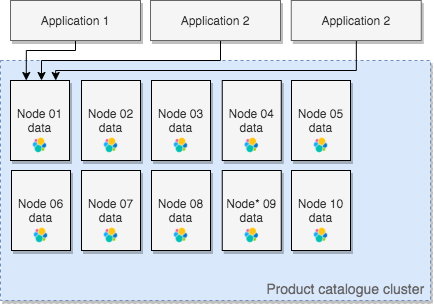

The first version of the cluster architecture was much simpler than what it is today. The cluster was a collection of a few nodes. As often happens in a start-up environment, we kept postponing the task of adding dedicated master nodes. The cluster ran well until we faced a couple of incidents.

While we were ingesting a huge number of products into the cluster (a daily operation), one of our data nodes, elected as master, occasionally ran out of memory. This forced the other nodes to elect a new master, the primary shards allocated on the failed node became unavailable, and the cluster state turned red. Most searches simply timed out. Obviously, such behaviour is not acceptable in a production environment.

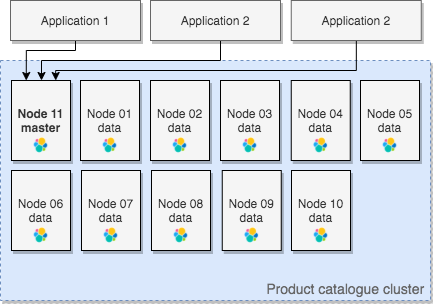

To address this problem, we added a dedicated master node. This stabilised the cluster and solved the problem above.

Since the ES cluster was a collection of EC2 instances, the applications used the master node IP address as the only cluster endpoint. This was against best practice, so we decided to refactor the Elasticsearch connectors in our applications and add multi-node support. We used the SimpleConnectionPool with RoundRobin as the default selector. The responsibility for distributing requests between data nodes fell on the applications' shoulders.

Infrastructure and performance monitoring

From the very beginning, the monitoring tools in place were the NewRelic Infrastructure monitoring solution together with the AWS CloudWatch service. The NewRelic infrastructure agent, installed on every host, collects and publishes infrastructure metrics. It makes it easy to observe a node’s resource utilisation, spot anomalies in the NewRelic One dashboard, configure thresholds for certain metrics, and set alerts accordingly.

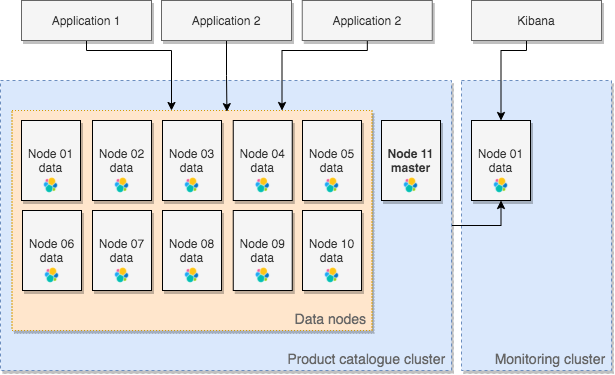

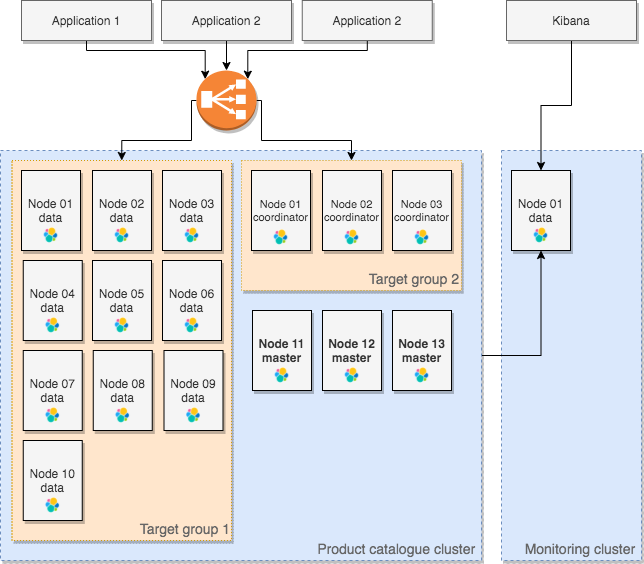

Aside from the infrastructure metrics, we had no visibility of some important cluster metrics. Accessing the _cat and _cluster APIs is not very convenient, especially when the cluster is experiencing stability issues. At this point we finally introduced a dedicated single-node monitoring cluster for the purpose of monitoring the product catalogue cluster.
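To illustrate the kind of cluster-level information that host metrics do not show, here is a minimal sketch that summarises a `_cluster/health` response. The field names are those returned by the cluster health API; the sample values, the cluster name and both helper functions are hypothetical and used only for illustration.

```python
import json

# Hypothetical sample of a GET _cluster/health response body (values invented).
SAMPLE_HEALTH = json.loads("""
{
  "cluster_name": "product-catalogue",
  "status": "yellow",
  "number_of_nodes": 4,
  "number_of_data_nodes": 3,
  "active_primary_shards": 20,
  "active_shards": 38,
  "relocating_shards": 0,
  "initializing_shards": 0,
  "unassigned_shards": 2
}
""")


def needs_attention(health: dict) -> bool:
    """Return True when the cluster is not green or has unassigned shards."""
    return health["status"] != "green" or health["unassigned_shards"] > 0


def summarise_health(health: dict) -> str:
    """Build a one-line, human-readable summary of the health document."""
    return (
        f"{health['cluster_name']} is {health['status']}: "
        f"{health['number_of_nodes']} nodes, "
        f"{health['active_shards']} active shards, "
        f"{health['unassigned_shards']} unassigned"
    )


if __name__ == "__main__":
    print(summarise_health(SAMPLE_HEALTH))
    print("needs attention:", needs_attention(SAMPLE_HEALTH))
```

Polling an endpoint like this by hand works while the cluster is responsive, which is exactly when it is least needed; a separate monitoring cluster keeps the history available during incidents.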

The Kibana instance of the monitoring cluster gave us visibility of search and indexing rates and latencies, and easier access to the list of open indices, nodes and allocated shards. The shard activity section, which displays any shard movements between nodes, helped us quickly understand what was going on in the cluster.

Stabilising the cluster

The next significant phase in the evolution was related to the natural growth of the product catalogue, specifically the size of the data volume and the number of applications interacting with the cluster. The first data node started becoming unstable. After some research, we found out that the network interface of this node had begun rejecting packets whenever one of our internal applications was performing operations on large datasets.

Moreover, all search queries coming from PHP-FPM applications were hitting the first node. When an FPM worker processes a request, it creates and maintains the connection, then destroys it once processing is completed. Because the selector starts from scratch on every request, RoundRobin always returns the first node in this case. The SniffingConnectionPool negatively impacts performance, even though sniffing is a relatively lightweight operation. The lack of a ready-to-use solution for sharing a connection pool across FPM processes would have forced us to develop and maintain our own connection pool.
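The effect is easy to reproduce in a small simulation. The sketch below is not the PHP client's code; it is a simplified Python model of a round-robin selector, with hypothetical node names, that contrasts a selector recreated for every request (the FPM case) with one that lives across requests.

```python
from collections import Counter
from itertools import count

NODES = ["data-1", "data-2", "data-3"]  # hypothetical node names


class RoundRobinSelector:
    """Simplified round-robin selector: cycles through nodes in order."""

    def __init__(self) -> None:
        self._counter = count()  # starts at 0 for every new selector

    def select(self, nodes: list) -> str:
        return nodes[next(self._counter) % len(nodes)]


def short_lived_workers(requests: int) -> Counter:
    """Model FPM: a fresh selector is built and discarded per request."""
    hits = Counter()
    for _ in range(requests):
        selector = RoundRobinSelector()  # state is lost between requests
        hits[selector.select(NODES)] += 1
    return hits


def long_lived_client(requests: int) -> Counter:
    """Model a long-running process that keeps one selector alive."""
    selector = RoundRobinSelector()
    hits = Counter()
    for _ in range(requests):
        hits[selector.select(NODES)] += 1
    return hits


if __name__ == "__main__":
    print("per-request selector:", dict(short_lived_workers(9)))
    print("shared selector:     ", dict(long_lived_client(9)))
```

With a per-request selector every hit lands on the first node in the list, while the long-lived selector spreads the same requests evenly.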

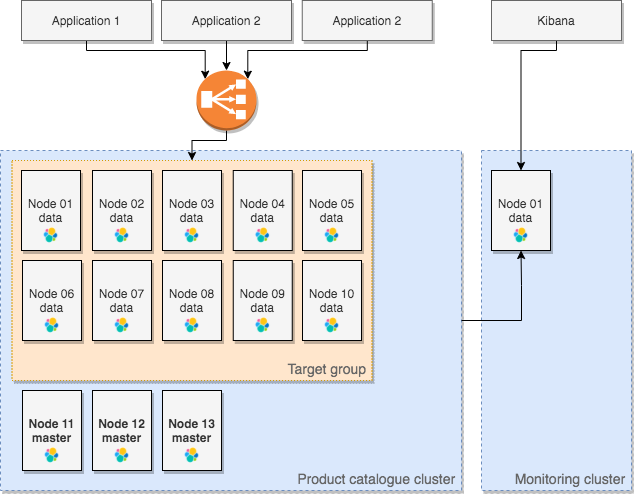

Taking the above into consideration, we decided to introduce a load balancer and move the responsibility for distributing queries between nodes out of the application level.

Besides the primary goal of distributing requests between data nodes, the presence of an application load balancer addresses a few more things:

Help make sure connections cannot be established with individual nodes. The security group rules of the nodes allow traffic only from the nodes themselves and from the load balancer’s subnets.

Log all requests to an S3 bucket, where they can be further analysed using the Athena service.

Maintain the cluster at any time without needing to modify application configurations. Whenever we need to increase or decrease the cluster capacity or replace individual nodes, we do not need to turn maintenance mode on.

Use the simplest StaticNoPingConnectionPool to allow applications to establish the connection as fast as possible.

Moving forward, we noted that an outage of the single master node would still hurt cluster availability. Three dedicated master-eligible nodes make it possible to safely form a quorum if one of these nodes fails.
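The reasoning behind three nodes is simple majority arithmetic. The sketch below assumes a plain majority quorum (more than half of the master-eligible nodes); the function names are hypothetical.

```python
def quorum_size(master_eligible: int) -> int:
    """Smallest number of nodes that forms a strict majority."""
    return master_eligible // 2 + 1


def tolerated_failures(master_eligible: int) -> int:
    """How many master-eligible nodes can fail while a majority remains."""
    return master_eligible - quorum_size(master_eligible)


if __name__ == "__main__":
    for nodes in (1, 2, 3):
        print(
            f"{nodes} master-eligible node(s): quorum {quorum_size(nodes)}, "
            f"tolerates {tolerated_failures(nodes)} failure(s)"
        )
```

Under this assumption, one or two master-eligible nodes tolerate no failures at all, and three is the smallest count that survives the loss of one node.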

Even though we ingest a large number of products into the cluster at a time when client applications receive the lowest amount of traffic, the performance of search requests is still impacted. To reduce the correlation between high search latencies and heavy indexing, we added nodes that perform the coordinating role.

Coordinating nodes receive search requests and forward them to the data nodes which hold the data. Data nodes execute the request locally and return the results to the coordinating node. Coordinating nodes helped to cut down latencies of search requests substantially.
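The scatter-gather flow described above can be sketched in a few lines. This is a toy model rather than Elasticsearch internals: the node names, documents and scores are invented, and each "data node" is just a list of `(document, score)` pairs.

```python
import heapq

# Hypothetical per-node hits: (document id, relevance score).
SHARDS = {
    "data-1": [("phone-a", 0.91), ("phone-b", 0.42)],
    "data-2": [("phone-c", 0.87), ("phone-d", 0.15)],
    "data-3": [("phone-e", 0.64)],
}


def search_shard(hits: list, size: int) -> list:
    """Data node step: return the local top `size` hits by score."""
    return heapq.nlargest(size, hits, key=lambda hit: hit[1])


def coordinate(shards: dict, size: int) -> list:
    """Coordinating node step: fan out, collect, and merge partial results."""
    partial = []
    for hits in shards.values():
        partial.extend(search_shard(hits, size))  # scatter and gather
    return heapq.nlargest(size, partial, key=lambda hit: hit[1])  # final merge


if __name__ == "__main__":
    for doc, score in coordinate(SHARDS, size=3):
        print(doc, score)
```

The merge step runs on the coordinating node, so the memory and CPU it needs are kept away from data nodes that are busy indexing.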

Storage tuning

Since we host our workloads in the Amazon cloud, data nodes hold shards on EBS volumes. In the beginning, 1 TB volumes performed well with 3000 IOPS and a maximum throughput of 250 MB/s.

At some point, we began noticing that Elasticsearch nodes hit these limits from time to time, especially when internal applications were ingesting data and running heavy aggregations. Keeping the daily import of the fresh product catalogue within a one-hour window is one of our highest priorities. Given the high cost of volumes with provisioned IOPS, we were lucky to receive the announcement from AWS introducing gp3, the next generation of EBS volumes. It allowed us to shrink the disks slightly to pay less for storage, and to boost IOPS and throughput to 4000 and 300 MB/s respectively.
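As a rough back-of-the-envelope check of what the change means, the sketch below computes the relative gains from the figures quoted above, plus the average I/O size at which the throughput cap is reached before the IOPS cap. It assumes 1 MB = 1000 KB for simplicity; the function names are hypothetical.

```python
def relative_gain_percent(before: float, after: float) -> float:
    """Percentage increase from `before` to `after`."""
    return (after - before) * 100 / before


def crossover_io_size_kb(iops: int, throughput_mb_s: int) -> float:
    """Average I/O size (KB) above which throughput, not IOPS, is the limit."""
    return throughput_mb_s * 1000 / iops


if __name__ == "__main__":
    old_iops, old_tput = 3000, 250  # original volumes
    new_iops, new_tput = 4000, 300  # after the move to gp3

    print(f"IOPS gain: {relative_gain_percent(old_iops, new_iops):.1f}%")
    print(f"Throughput gain: {relative_gain_percent(old_tput, new_tput):.1f}%")
    print(f"Old crossover I/O size: {crossover_io_size_kb(old_iops, old_tput):.1f} KB")
    print(f"New crossover I/O size: {crossover_io_size_kb(new_iops, new_tput):.1f} KB")
```

Comparing the crossover size with the typical I/O size of the workload gives a hint as to which of the two limits is worth raising first.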

These optimisations drastically reduced the latencies of search requests (the spikes in the graphs below) during heavy indexing and shortened the recurring job that imports the product catalogue.

Summary

We went through many other steps to optimise the configuration for heavy indexing as well as heavy aggregations. These include optimisations of index mappings, shard settings and document fields, and optimisations at the application level, such as bulk requests, multithreading, managing replicas and many more. These adjustments are outside the scope of this article.

It is worth mentioning that we are not stopping at this point and keep evolving our Elasticsearch service. A couple of significant goals for the near future include the following:

Upgrade the stack to the latest version 8.0,

Introduce dedicated data nodes for the intensive data streams.

Core services continuously change in response to new demands and conditions, including user requests for new features, evolving system dependencies and technology upgrades.

As mentioned at the beginning, no service reliability standards were defined, since we operate in a start-up environment. We naturally built the learning path while operating the service, responding to incidents, conducting postmortems and analysing root causes. In our experience, the learning path was not easy. There are always stumbling blocks along the road, and we hope that by sharing our experience of implementing Elasticsearch, we can help you avoid stumbling over them yourself.