In the first part of You don't know Redis, I built an app using Redis as a primary database. For most people, it might sound unusual simply because the key-value data structure seems suboptimal for handling complex data models.

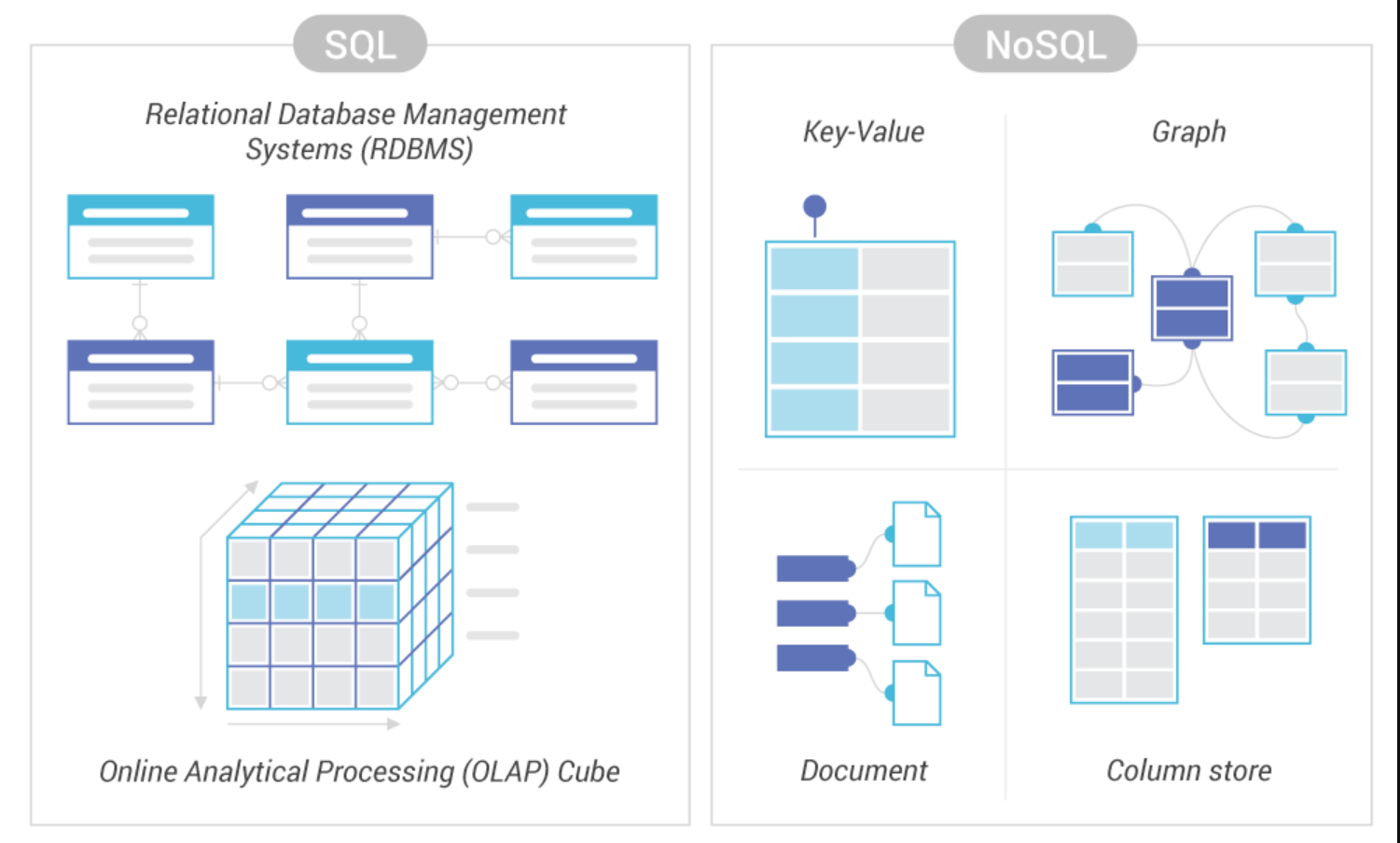

In practice, the choice of a database often depends on the application’s data-access patterns as well as the current and possible future requirements.

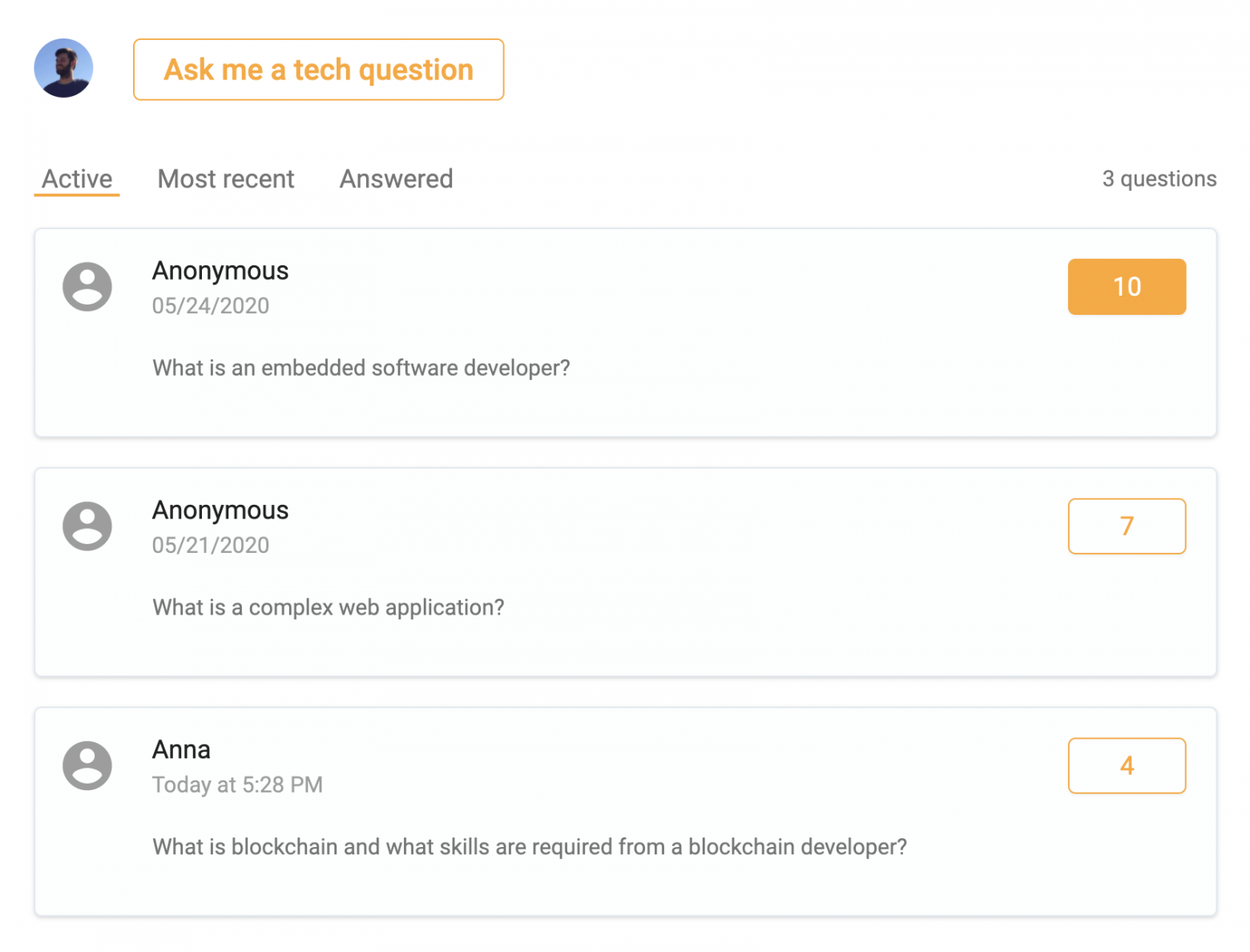

Redis was a perfect database for a Q&A board. I described how I took advantage of sorted sets and hashes data types to build features efficiently with less code.

Now I need to extend the Q&A board with registration/login functionality.

I will use Redis again. There are two reasons for that.

Firstly, I want to avoid the extra complexity that comes with adding yet another database.

Secondly, based on the requirements that I have, Redis is suitable for the task.

Important to note, that user registration and login is not always about only email and password handling. Users may have a lot of relations with other data which can grow complex over time.

Despite Redis being suitable for my task, it may not be a good choice for other projects.

Always define what data structure you need now and may need in the future to pick the right database.