Contest

Today I would like to discuss the games of Chess and Go, their world champions, the algorithms behind the machines that beat them, and AI.

In 1997, Deep Blue, a chess computer developed by IBM, defeated the world Chess champion Garry Kasparov. After that, Go remained the last board game in which humans were still better than machines.

Why is that?

Chess differs from Go primarily in the number of possible variations at each move. Chess is more predictable and has more structured rules: each piece has a conventional value (e.g. bishop = 3 pawns, rook = 5 pawns, so a rook is worth more than a bishop), and there are well-studied openings and strategies. Go, by contrast, has very simple rules, and this simplicity is precisely what makes the game so complex for a machine.

Go is one of the oldest board games. Until recently, it was assumed that a machine could not play on an equal footing with a professional player, because the game requires a high level of abstraction and it is impossible to search through all possible scenarios: a standard 19×19 board (goban) has more than 10^170 legal positions, far more than the number of atoms in the observable universe.
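Both ideas in this paragraph, the fixed piece values of chess and the number of variations per move, can be made concrete in a few lines of Python. This is only an illustrative sketch: the values for pawn, knight and queen (1, 3 and 9) are the conventional textbook values rather than something stated above, the branching factors and game lengths (about 35 moves over about 80 plies for chess, about 250 moves over about 150 plies for Go) are commonly cited rough averages, and `material_balance` and `log10_game_tree_size` are hypothetical helper names.

```python
import math

# Conventional piece values, measured in pawns (the king is not counted).
PIECE_VALUES = {"P": 1, "N": 3, "B": 3, "R": 5, "Q": 9}

def material_balance(white_pieces, black_pieces):
    """Return White's material advantage in pawns (positive = White is ahead)."""
    white = sum(PIECE_VALUES[p] for p in white_pieces)
    black = sum(PIECE_VALUES[p] for p in black_pieces)
    return white - black

def log10_game_tree_size(branching_factor, depth):
    """Rough size of the game tree, branching_factor ** depth, as a power of ten."""
    return depth * math.log10(branching_factor)

# A rook against a bishop: 5 - 3 = 2 pawns in White's favour.
print(material_balance(["R"], ["B"]))
# b ** d with the rough averages above: chess vs Go.
print(f"chess: about 10^{log10_game_tree_size(35, 80):.1f}")
print(f"go:    about 10^{log10_game_tree_size(250, 150):.1f}")
```

The second estimate comes out at roughly 10^360 possible games, which is why exhaustive search is hopeless for Go even though a simple material count already says a lot about a chess position.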

However, almost 20 years later, in 2015, there was a breakthrough. Google's DeepMind developed AlphaGo, the program that took the last step towards computers defeating the world champions at board games. AlphaGo first defeated the European champion and then, in March 2016, demonstrated its strength by defeating Lee Sedol, one of the strongest Go players in the world, with a score of 4:1 in favour of the machine. In 2017, DeepMind introduced a new version of the program: AlphaGo Zero.

Murray Campbell, one of the creators of Deep Blue, called AlphaGo's victory "the end of an era... board games are more or less done and it's time to move on."

Differences in algorithms

AlphaGo = Monte Carlo Tree Search algorithm + databases of human games

I. Policy Network:

Trained on games of strong human players (about 150,000 games) to imitate their moves: given a board position, it predicts how likely each possible next move is.
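A policy network ends in a probability distribution over moves. The sketch below shows only that final step, under stated assumptions: the raw scores (logits) are made-up numbers standing in for a trained network's output, illegal moves are masked out, and the hypothetical helper `move_probabilities` turns the remaining scores into probabilities with a softmax. Keeping only the few most probable moves is how such a network narrows the breadth of the search.

```python
import math

def move_probabilities(logits, legal):
    """Softmax over the legal moves only; illegal moves get probability 0."""
    # Subtract the largest legal logit for numerical stability.
    top = max(logits[m] for m in legal)
    exps = {m: math.exp(logits[m] - top) for m in legal}
    total = sum(exps.values())
    return {m: exps.get(m, 0.0) / total for m in logits}

def top_k_moves(probs, k):
    """The k most probable moves: the candidates a tree search would try first."""
    return sorted(probs, key=probs.get, reverse=True)[:k]

# Hypothetical network output for four board points (names are illustrative).
logits = {"D4": 2.0, "Q16": 1.5, "C3": -1.0, "K10": 0.5}
legal = {"D4", "Q16", "K10"}          # suppose C3 is already occupied
probs = move_probabilities(logits, legal)
print(top_k_moves(probs, 2))          # ['D4', 'Q16']
```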

II. Value Network:

It evaluates a board position and estimates the probability of winning from that particular position.

(To reduce the size of the search tree, about 10^360 possible games, we can compare the winning probabilities of the positions and cut off the branches with the worst ones.)
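The pruning idea in the parenthesis can be sketched as follows, assuming a value network has already produced an estimated win probability for each candidate move. The estimates and the helper `prune_by_value` are hypothetical, and this hard cutoff is a simplified illustration of the idea rather than AlphaGo's exact mechanism, which folds such estimates into the tree search itself.

```python
def prune_by_value(win_prob, keep):
    """Keep the `keep` moves whose resulting positions have the best win estimate.

    win_prob maps a move to the value network's estimate (0.0 to 1.0) of winning
    after that move; every other branch is cut off and never searched.
    """
    ranked = sorted(win_prob.items(), key=lambda item: item[1], reverse=True)
    return [move for move, _ in ranked[:keep]]

# Hypothetical value-network estimates for five candidate moves.
estimates = {"A": 0.62, "B": 0.18, "C": 0.55, "D": 0.07, "E": 0.49}
print(prune_by_value(estimates, keep=2))   # ['A', 'C']

# Keeping 3 of ~250 moves at each of 4 levels leaves 3**4 leaves instead of 250**4.
print(3 ** 4, 250 ** 4)                    # 81 3906250000
```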

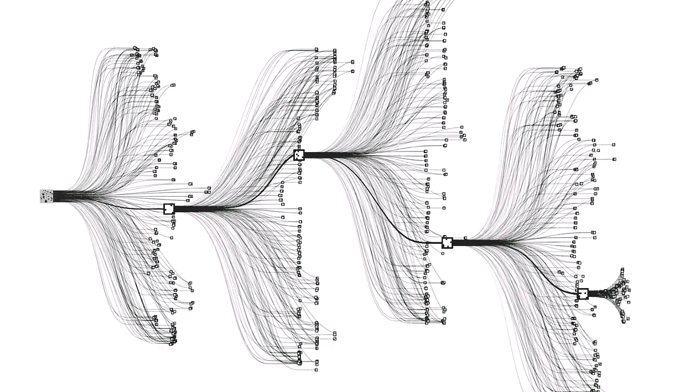

III. Tree search:

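It explores different continuations of the game and tries to predict what will happen several moves ahead, using the policy network to pick promising moves and the value network to judge the resulting positions.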
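To make the tree search concrete, here is a minimal Monte Carlo Tree Search in Python. It is deliberately simpler than AlphaGo's: it uses the classic UCT selection rule and random playouts instead of policy and value networks, and it plays a toy game instead of Go (a pile of stones; each player removes 1 to 3; whoever takes the last stone wins). All names are hypothetical. Each iteration runs the four usual phases: selection, expansion, simulation and backpropagation.

```python
import math
import random

MOVES = (1, 2, 3)   # toy game: remove 1-3 stones; taking the last stone wins

def legal_moves(stones):
    return [m for m in MOVES if m <= stones]

class Node:
    def __init__(self, stones, parent=None, move=None):
        self.stones = stones            # stones left for the player to move
        self.parent = parent
        self.move = move                # move that led to this node
        self.children = []
        self.untried = legal_moves(stones)
        self.visits = 0
        self.wins = 0.0                 # wins for the player who made `move`

    def best_uct_child(self, c=1.4):
        # UCT: average win rate (exploitation) + bonus for rarely visited children.
        return max(
            self.children,
            key=lambda ch: ch.wins / ch.visits
            + c * math.sqrt(math.log(self.visits) / ch.visits),
        )

def random_playout(stones, rng):
    """Play random moves to the end; True if the player to move now wins."""
    turn = 0                            # 0 = the player to move at the start
    while stones > 0:
        stones -= rng.choice(legal_moves(stones))
        turn ^= 1
    return turn == 1                    # turn == 1 means player 0 moved last

def mcts(stones, iterations=2000, seed=0):
    rng = random.Random(seed)
    root = Node(stones)
    for _ in range(iterations):
        node = root
        # 1. Selection: descend through fully expanded nodes.
        while not node.untried and node.children:
            node = node.best_uct_child()
        # 2. Expansion: add one untried move as a new child.
        if node.untried:
            move = node.untried.pop()
            child = Node(node.stones - move, parent=node, move=move)
            node.children.append(child)
            node = child
        # 3. Simulation: a random game from the new position.
        to_move_wins = random_playout(node.stones, rng)
        # 4. Backpropagation: credit the player who moved into each node.
        while node is not None:
            node.visits += 1
            if not to_move_wins:
                node.wins += 1
            to_move_wins = not to_move_wins
            node = node.parent
    # The most visited move is the one the search trusts most.
    return max(root.children, key=lambda ch: ch.visits).move

print(mcts(5))   # 1: leaves the opponent a losing pile of 4
```

From 5 stones the winning move is to take 1 and leave the opponent a multiple of 4, and the search settles on it without being told. In AlphaGo, the policy network guides which moves get explored, and the value network complements the random playouts when evaluating positions.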

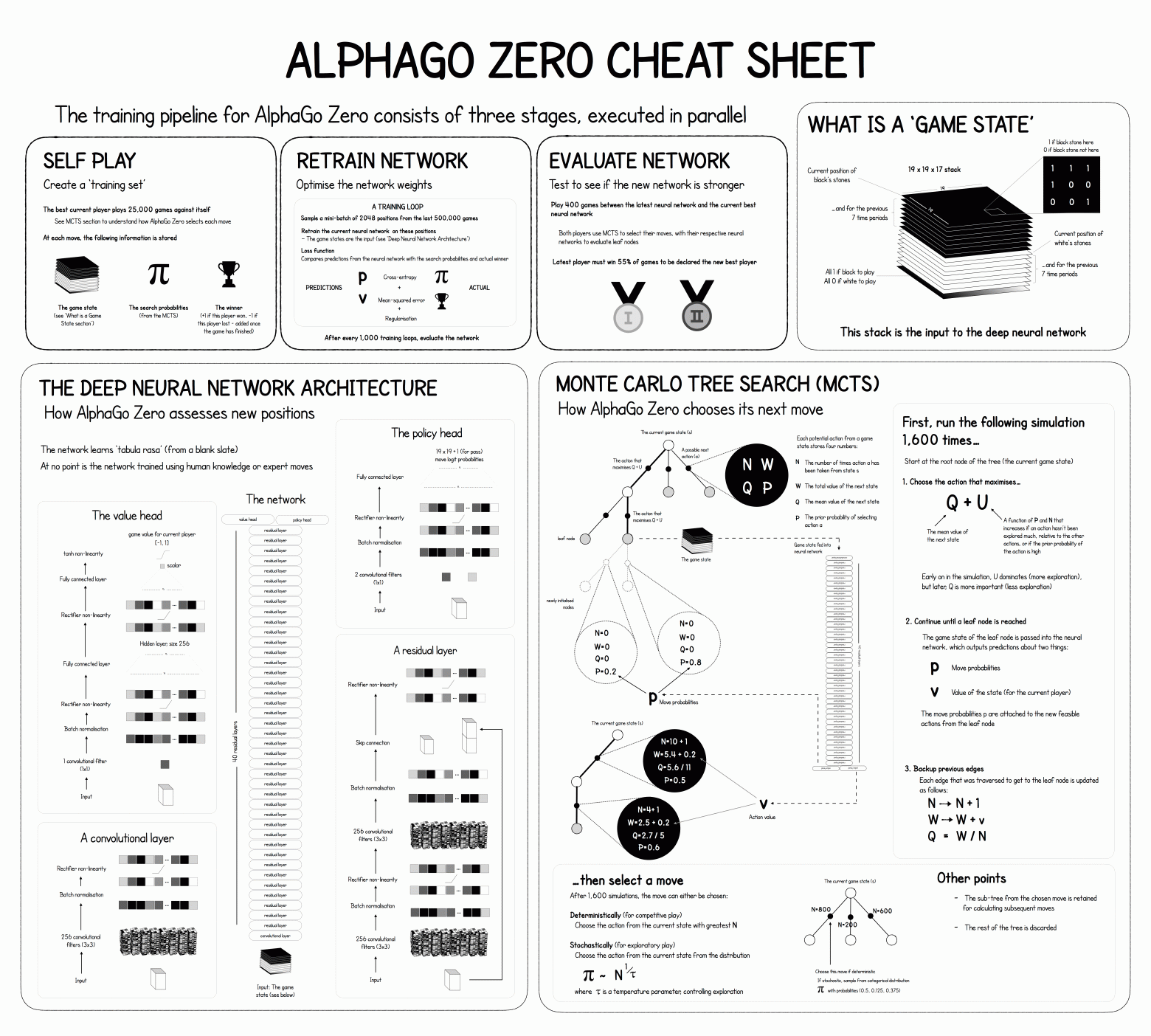

AlphaGo Zero = reinforcement learning

I. Self-trained

It learns entirely by playing against itself, with no human domain knowledge and no pre-training on human games.

II. Retrain network

Optimise the network weights using the games it has just played against itself.

III. Evaluate Network

Test whether the new network is stronger than the previous one.
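The three steps above form a loop, sketched here on the same kind of toy stone game instead of Go. Everything in it is a simplified assumption rather than AlphaGo Zero's actual method: the "network" is just a table of move preferences, retraining is a crude rule that strengthens the winner's moves and weakens the loser's (in place of gradient descent on a deep network), and the 55% win rate required to replace the old network is an illustrative threshold. All function names are hypothetical.

```python
import random

MOVES = (1, 2, 3)   # toy game: remove 1-3 stones; taking the last stone wins
START = 10          # stones at the start of every game

def new_policy():
    # Tabular "network": one preference weight per (stones, move), uniform at first.
    return {s: [1.0 for _ in MOVES] for s in range(1, START + 1)}

def choose(policy, stones, rng):
    legal = [m for m in MOVES if m <= stones]
    weights = [policy[stones][m - 1] for m in legal]
    return rng.choices(legal, weights=weights)[0]

def play(first, second, rng):
    """One game; returns (winner 0 or 1, [(player, stones, move), ...])."""
    players = (first, second)
    stones, turn, history = START, 0, []
    while stones > 0:
        move = choose(players[turn], stones, rng)
        history.append((turn, stones, move))
        stones -= move
        turn ^= 1
    return turn ^ 1, history            # whoever took the last stone wins

def retrain(policy, games, lr=0.5):
    """II. Build a candidate: reward the winner's moves, penalise the loser's."""
    new = {s: w[:] for s, w in policy.items()}
    for winner, history in games:
        for player, stones, move in history:
            delta = lr if player == winner else -lr
            new[stones][move - 1] = max(0.01, new[stones][move - 1] + delta)
    return new

def evaluate(candidate, best, n_games, rng):
    """III. Fraction of games the candidate wins, alternating who moves first."""
    wins = 0
    for g in range(n_games):
        if g % 2 == 0:
            winner, _ = play(candidate, best, rng)
            wins += winner == 0
        else:
            winner, _ = play(best, candidate, rng)
            wins += winner == 1
    return wins / n_games

def train(iterations=20, games_per_iter=200, eval_games=100, gate=0.55, seed=0):
    rng = random.Random(seed)
    best = new_policy()
    for _ in range(iterations):
        # I. Self-play: the current best policy plays against itself.
        games = [play(best, best, rng) for _ in range(games_per_iter)]
        candidate = retrain(best, games)
        # Keep the candidate only if it clearly beats the current best.
        if evaluate(candidate, best, eval_games, rng) > gate:
            best = candidate
    return best

policy = train()
print(policy[5])   # learned preferences for taking 1, 2 or 3 stones from a pile of 5
```

The gate in the last step is the design choice that matters: a new network only replaces the old one when it wins clearly, so an unlucky retraining round cannot make the player weaker.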

Remarkably, AlphaGo Zero uses only one deep neural network (AlphaGo used separate policy and value networks), and it needed only 3 days of practice to outperform AlphaGo, which had required 6 weeks of training. AlphaGo Zero far surpassed its predecessor, beating AlphaGo with a crushing score of 100:0.

Impact on people

After his match against AlphaGo, Lee Sedol went on a two-month tour during which he did not lose a single game to a human opponent!

People realized that AI can now handle tasks that were considered exclusively human, since creativity and critical thinking are an indispensable part of Go.

What's fascinating about it?

Development of training approaches: AlphaGo was trained mostly on data from human expert games, while AlphaGo Zero learned exclusively through self-play, without any human data. The switch from relying on human knowledge to learning entirely from scratch demonstrated the power of reinforcement learning and its ability to exceed human performance.

Superior performance: AlphaGo Zero's game-based approach to self-learning let it discover groundbreaking strategies that human experts had never even considered. This highlighted the potential of AI to expand the boundaries of human knowledge and productivity in solving complex problems.

An effective way of learning for AI: models learn directly from data they generate themselves, without explicit human guidance or labeling.

Open Questions

What struck me as most interesting is that, with less human intervention, AI shows better results; the example of AlphaGo and AlphaGo Zero demonstrates this very convincingly. People look at learning through the prism of their own biological perception and project that view onto AI. But as biological beings, humans are far from perfect, so this model of learning may be questionable. So perhaps, in order to build an ideal AI, we need to step back from our human understanding of learning? Does a perfect algorithm, one that the machine truly "understands", require as little human supervision as possible? And will this lead us to lose control?

Nevertheless, having been the last undefeated game is worth a lot!