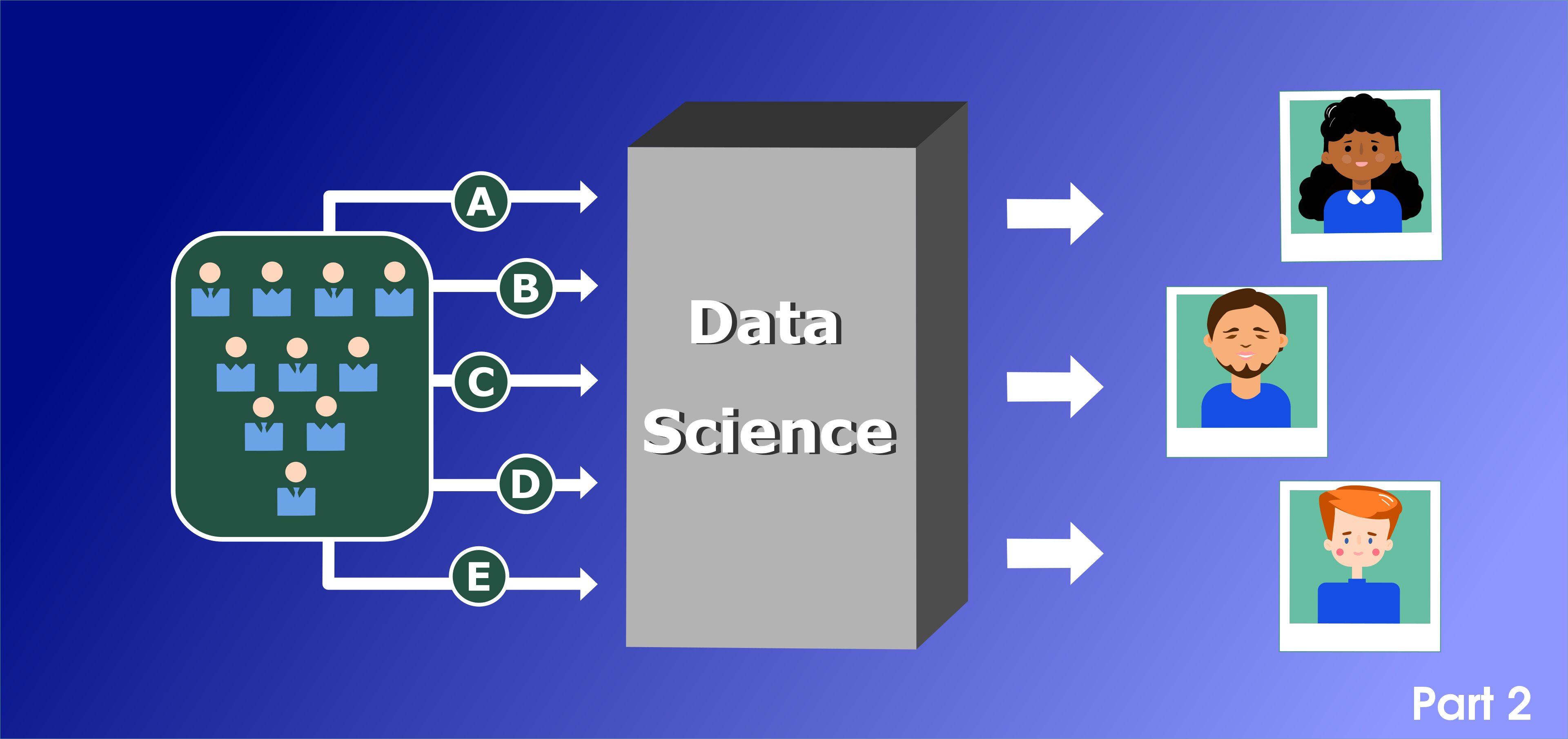

Machine Learning CPython library 'VKF'

The basis of artificial intelligence

When Alex Garland’s series Devs (on FX and Hulu) came out this year, it gave developers their own sexy Hollywood workup. Who knew that coders could get snarled into murder plots and love triangles just for designing machine learning programs? Or that their software would cause a philosophical crisis? Sure, the average day of a developer is more code writing than murder, but what a thrill to author a powerful new program.

If science were a dating app, quantum physics and machine learning probably wouldn’t be a match. They’re completely different fields and often require completely different backgrounds and skills. But throw in a little quantum computing and, suddenly, that science-matchmaking app becomes Tinder and the attraction between the two is palpable.

Hello, Habr!

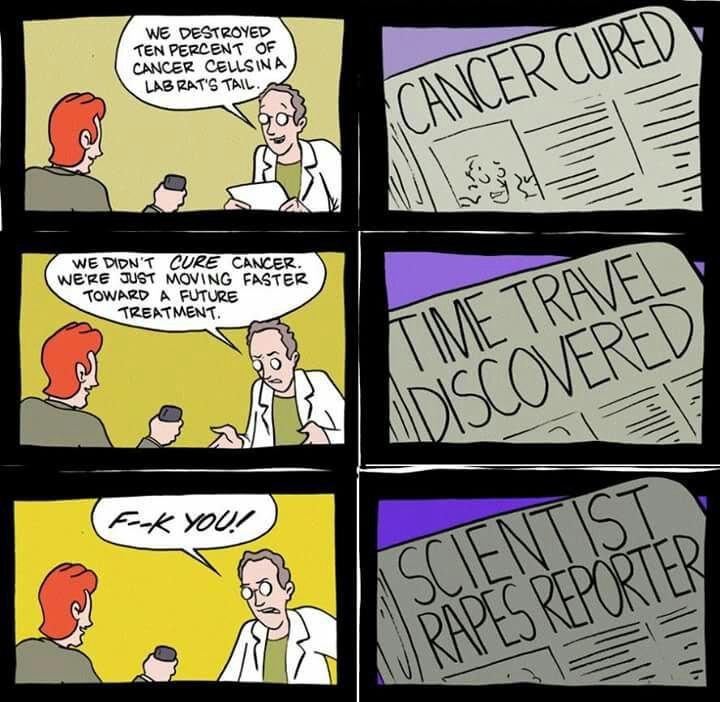

About a month ago, I had a feeling of constant anxiety. I began to eat poorly, sleep even worse, and constantly read a ton of news about the pandemic. According to that news, the coronavirus was either capturing or liberating our planet, was either a conspiracy of world governments or the vengeance of the pangolin, and threatened either everyone at once or me personally, along with my sleeping cat…

Hundreds of articles, social media posts, and YouTube-Telegram-Instagram-TikTok (yes, I sin) content of varying quality led me to nothing but an even greater sense of anxiety.

But one day I bought buckwheat and decided to end it all. As soon as possible!

Photo by Dugan Arnett on Boston Globe

Are you still looking for a new flat? Ready to make one last attempt? If so, follow me and I'll show you how to reach the finish line.