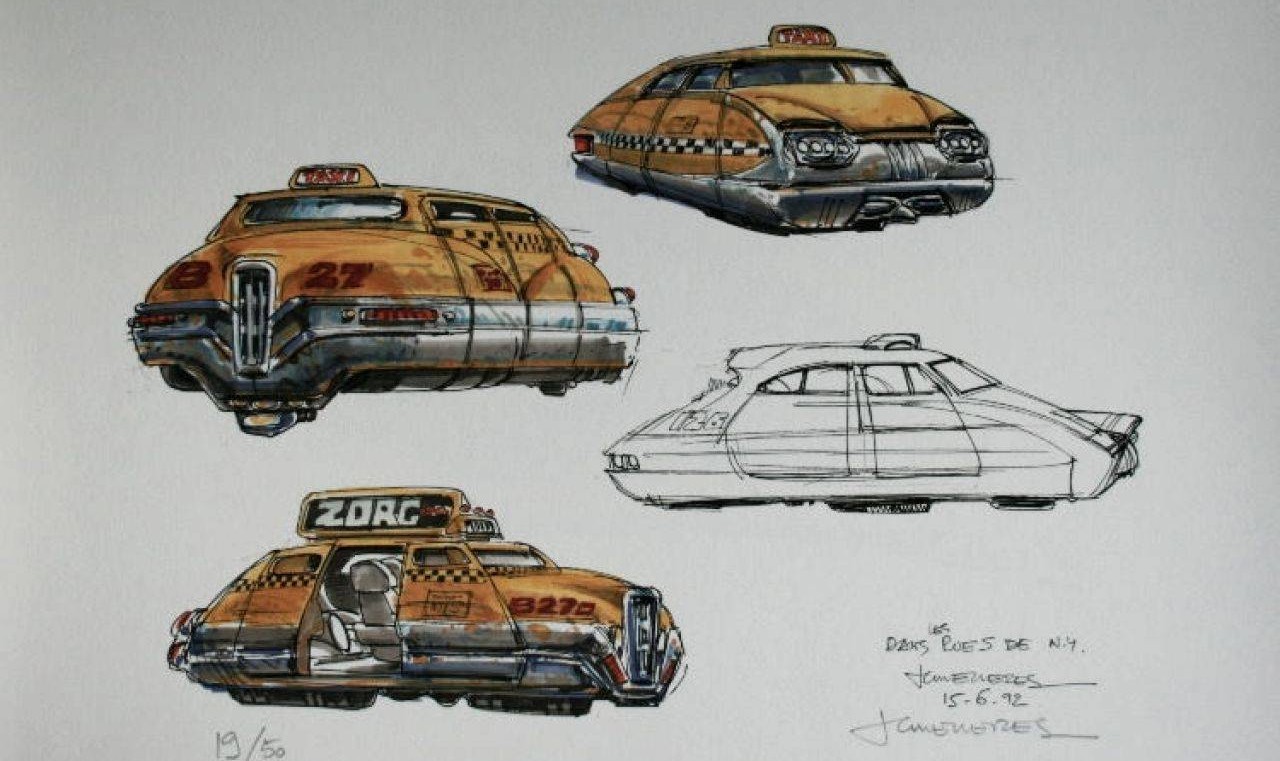

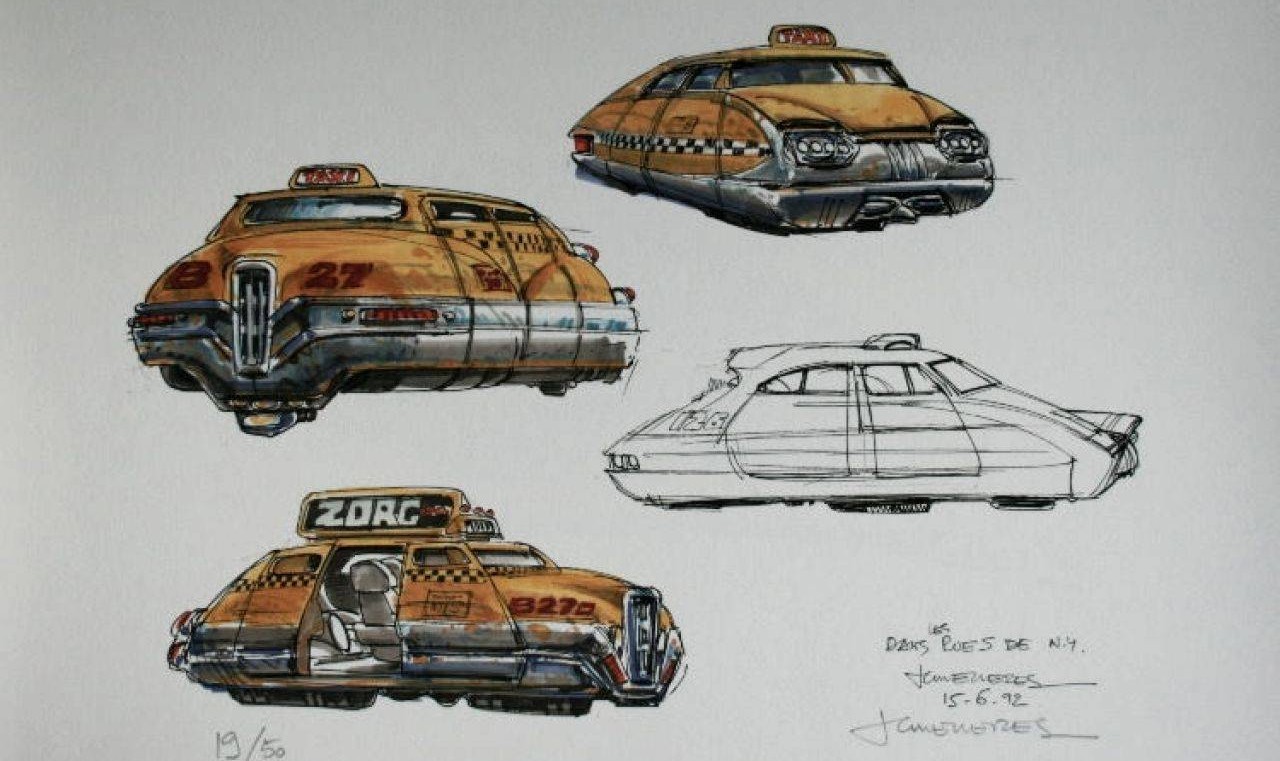

(Jean-Claude Mézières)

Translation provided by ChatGPT, link to the original article in Russian

Link to Part 1: «Preliminary Analysis» (ru / eng)

Link to Part 2: «Experiments on a Torus» (ru / eng)

Link to Part 3: «Practically Significant Solutions» (ru / eng)

Link to «Summary» (ru / eng)

1. About this series of articles

1.1 Central result

If I haven't made a critical mistake, I have discovered an astonishing passenger transportation scheme with unique characteristics. Imagine this scenario: you are in a big city and need to get from point A to point B. All you need to do is walk to the nearest intersection and enter your destination on your smartphone or on a special terminal installed there. Within a few minutes, a small but spacious bus arrives for you. You can board it without stooping and bring a stroller, a bicycle, or even a cello inside. The seating is comfortable, with room to stretch your legs. The bus takes you, without any transfers, to the intersection nearest to point B. The entire journey, including the wait at the stop, takes only 25-50% longer than the same trip by private car. By my estimate, in modern metropolises this type of transportation will be widely adopted, and a trip will cost about the same as a regular city bus fare.

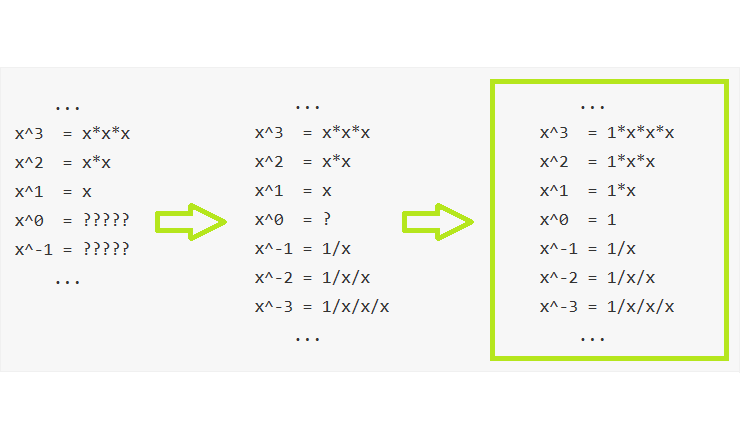

Surprisingly, the reasoning behind these findings rests on relatively simple mathematics; perhaps even a talented high school student, under fortunate circumstances, could have arrived at them independently. The practical significance of the topic and the modest mathematical prerequisites prompted me to write the article so that the reader can follow the path of discovery, learn some research techniques, and gain a good example for explaining to their children what mathematics is for and how it applies in everyday life.