SpesLab-Gambit: a convenient object annotation tool for training neural networks for video surveillance systems

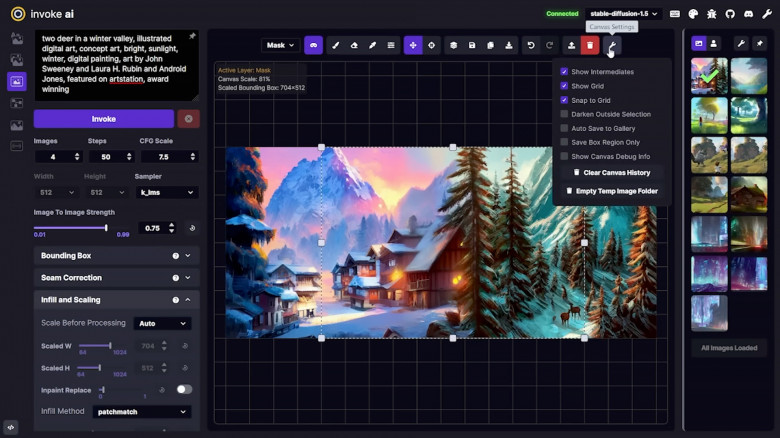

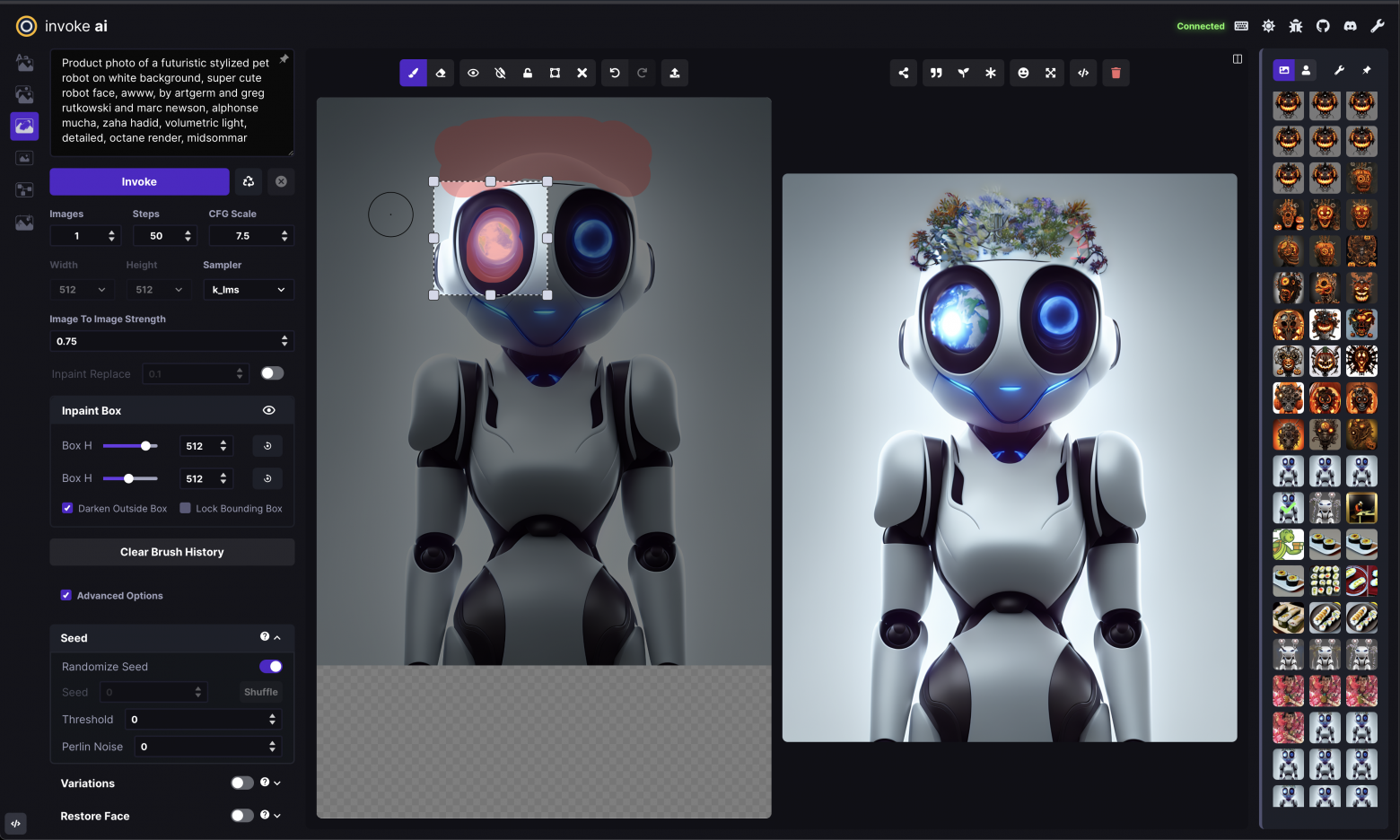

Developers of smart cameras, smart DVRs, and neural-network video analytics for surveillance systems need AI models that work in real-world street conditions. Out there, nobody carries professional cameras, carefully adjusts angles, sets up lighting, records without compression, or follows the rules taught in cinematography textbooks.

Of course, Gambit can be used for many other tasks, but its main focus is the convenient collection of material FROM video surveillance systems and dataset annotation specifically FOR video surveillance neural networks.

Gambit is not designed for polished photos and Internet reels. Quite the opposite: it is intended for low-quality footage from surveillance archives. At SpesLab, we call this kind of content “wild.”

It would seem that, for modern science, the question of what color the Moon and the Sun are when seen from space is so simple that in this century answering it should pose no problem at all. We mean the colors as observed from space specifically, because the atmosphere shifts colors through Rayleigh scattering. “Surely some encyclopedia described this in detail, with numbers, long ago,” you might say. Well, try searching the Internet for it. Any luck? Most likely not. At most you will find a few words saying that the Moon has a brownish tint and the Sun a reddish one. You will not find whether these tints are visible to the human eye, let alone their RGB values or even their color temperatures. What you will find is plenty of photos and videos in which the Moon, seen from space, is completely gray (mostly images from the American Apollo program), and in which the Sun, seen from space, is shown as white or even blue.
